This week in AI | Week 2

This week in artificial intelligence, Google revealed Gemma and Hugging Face introduced Cosmopedia. Additionally, Amazon announced that open-source, high-performing Mistral models will soon be accessible on Amazon Bedrock. Bringing such powerful AI to more users through cloud-based services may allow new applications to emerge. These advancements show how quickly AI is developing; in certain areas, systems can already perform tasks that humans cannot. As a result, the frontiers of what is possible are being redefined at a rapid rate. Exciting times lie ahead as the AI community delivers new and seemingly unimaginable achievements every week.

Google introduced Gemma, marking a pivotal moment in the democratisation of AI technology. Derived from the same technological lineage as the acclaimed Gemini models, Gemma is designed to foster responsible AI development, offering a suite of lightweight, state-of-the-art open models that promise to revolutionise how developers and researchers build and innovate.

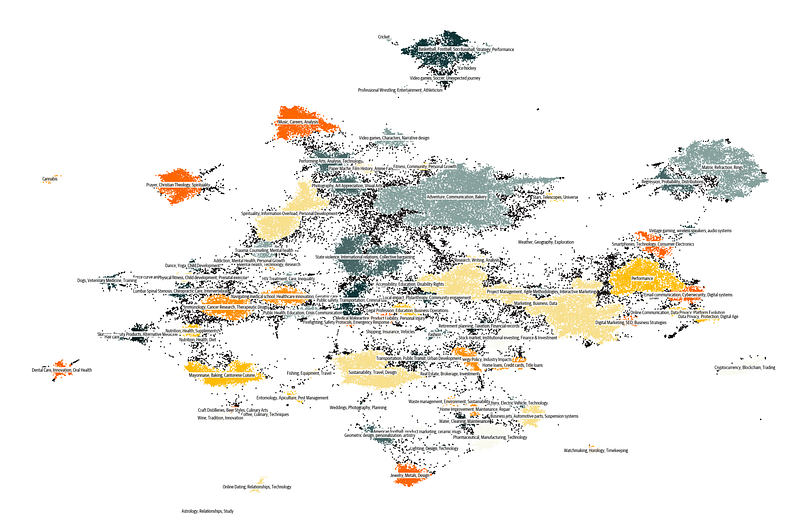

Hugging Face has recently unveiled Cosmopedia v0.1, the largest open synthetic dataset to date, consisting of over 30 million samples generated by Mixtral-8x7B-Instruct-v0.1. The dataset comprises various content types, such as textbooks, blog posts, stories, and WikiHow articles, and totals 25 billion tokens.

Cosmopedia v0.1 is a large-scale attempt to assemble world knowledge by mapping data from web datasets such as RefinedWeb and RedPajama. It is divided into eight distinct splits, each built from a different source of seed samples, and covers a wide range of topics.

To make the dataset easier to use, Hugging Face includes code snippets for loading individual splits. A smaller subset, Cosmopedia-100k, is also available for those who want a more manageable dataset. Cosmo-1B, a language model trained on Cosmopedia, demonstrates the dataset's scalability and adaptability.

Cosmopedia was designed to maximise diversity while minimising redundancy. Through varied prompt styles and target audiences, continual prompt refinement, and MinHash deduplication, the dataset achieves a remarkable breadth of coverage and originality in content.
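To give a feel for how MinHash deduplication works in general, here is a minimal, standard-library-only sketch. It is not Hugging Face's actual pipeline: the function names, the 3-word shingle size, the 64 hash functions, and the 0.8 similarity threshold are all illustrative assumptions. Large-scale pipelines typically add locality-sensitive hashing on top, so they do not have to compare every pair of documents as this toy version does.

```python
import hashlib
import re


def shingles(text, k=3):
    """Split text into a set of overlapping k-word shingles (k is an assumed value)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {" ".join(words)} if words else set()
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def minhash_signature(items, num_perm=64):
    """Build a MinHash signature: the minimum hash of the set under num_perm salted hashes."""
    if not items:
        return [0] * num_perm  # convention for empty documents (an assumption)
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")  # a different salt simulates a different hash function
        signature.append(min(
            int.from_bytes(
                hashlib.blake2b(s.encode("utf-8"), digest_size=8, salt=salt).digest(),
                "big",
            )
            for s in items
        ))
    return signature


def estimated_jaccard(sig_a, sig_b):
    """The fraction of matching signature positions estimates the Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def deduplicate(docs, threshold=0.8, num_perm=64):
    """Keep a document only if it is not too similar to any document already kept."""
    kept = []  # list of (document, signature) pairs
    for doc in docs:
        sig = minhash_signature(shingles(doc), num_perm)
        if all(estimated_jaccard(sig, other) < threshold for _, other in kept):
            kept.append((doc, sig))
    return [doc for doc, _ in kept]


if __name__ == "__main__":
    samples = [
        "The cat sat on the mat and looked out of the window.",
        "The cat sat on the mat and looked out of the window.",
        "Gradient descent updates parameters along the negative gradient.",
    ]
    for doc in deduplicate(samples):
        print(doc)
```

The threshold controls the trade-off: a lower value removes more near-duplicates but risks discarding documents that merely share common phrasing.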

In another exciting development, Mistral AI, a France-based AI company known for its fast and secure large language models (LLMs), is set to make its models publicly available on Amazon Bedrock soon. Mistral AI will join as the 7th foundation model provider on Amazon Bedrock, alongside other leading AI companies, giving developers and researchers more tools to innovate and scale their generative AI applications.

This week’s developments highlight a strong push for more open, accessible, and responsible AI technologies. The AI community continues to pursue inclusive, diverse, and ethically grounded innovation, with Google’s Gemma offering cutting-edge models for responsible development, Hugging Face’s Cosmopedia expanding the scope of synthetic data research, and Mistral AI’s move to Amazon Bedrock widening access to its models.

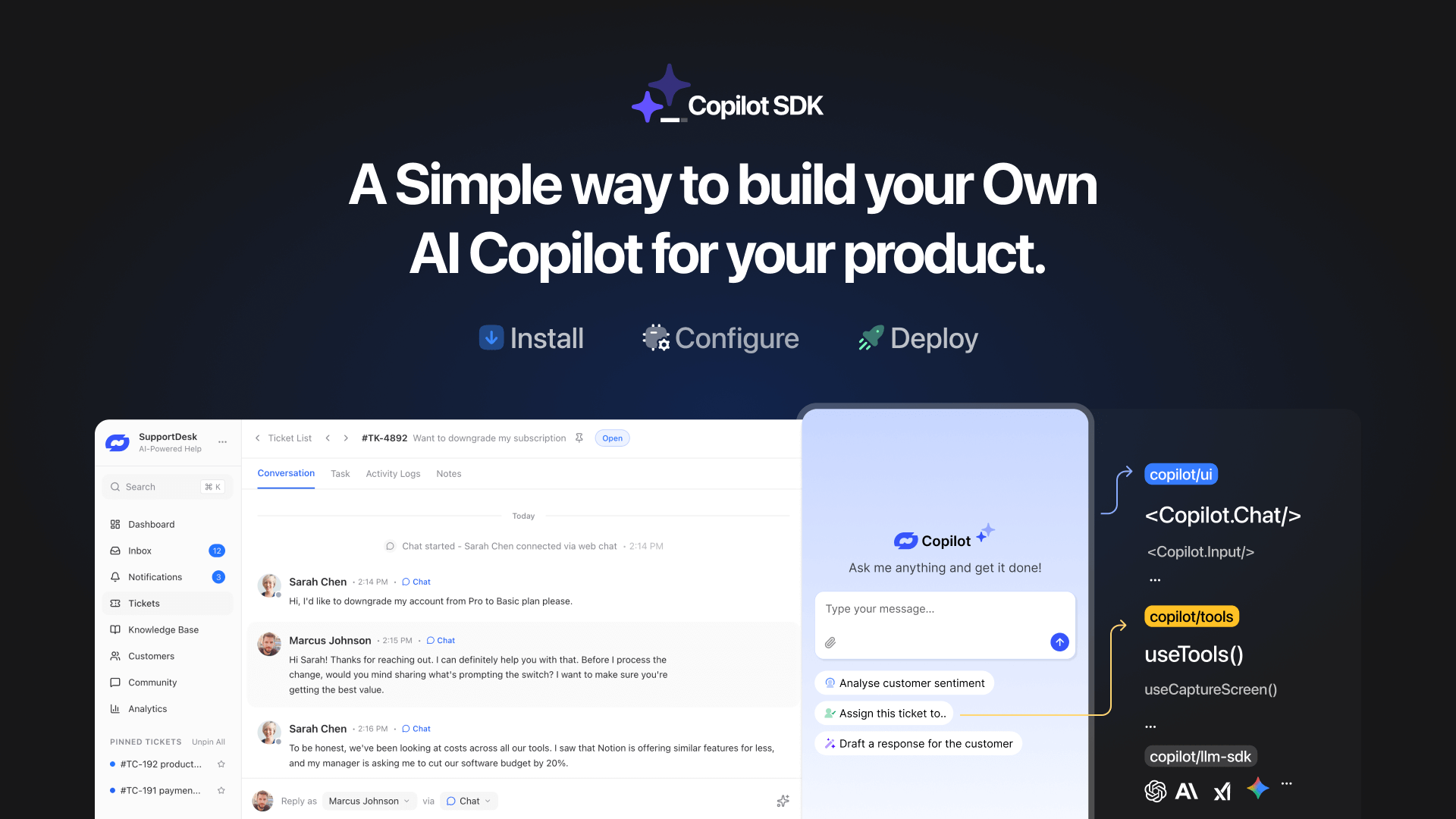

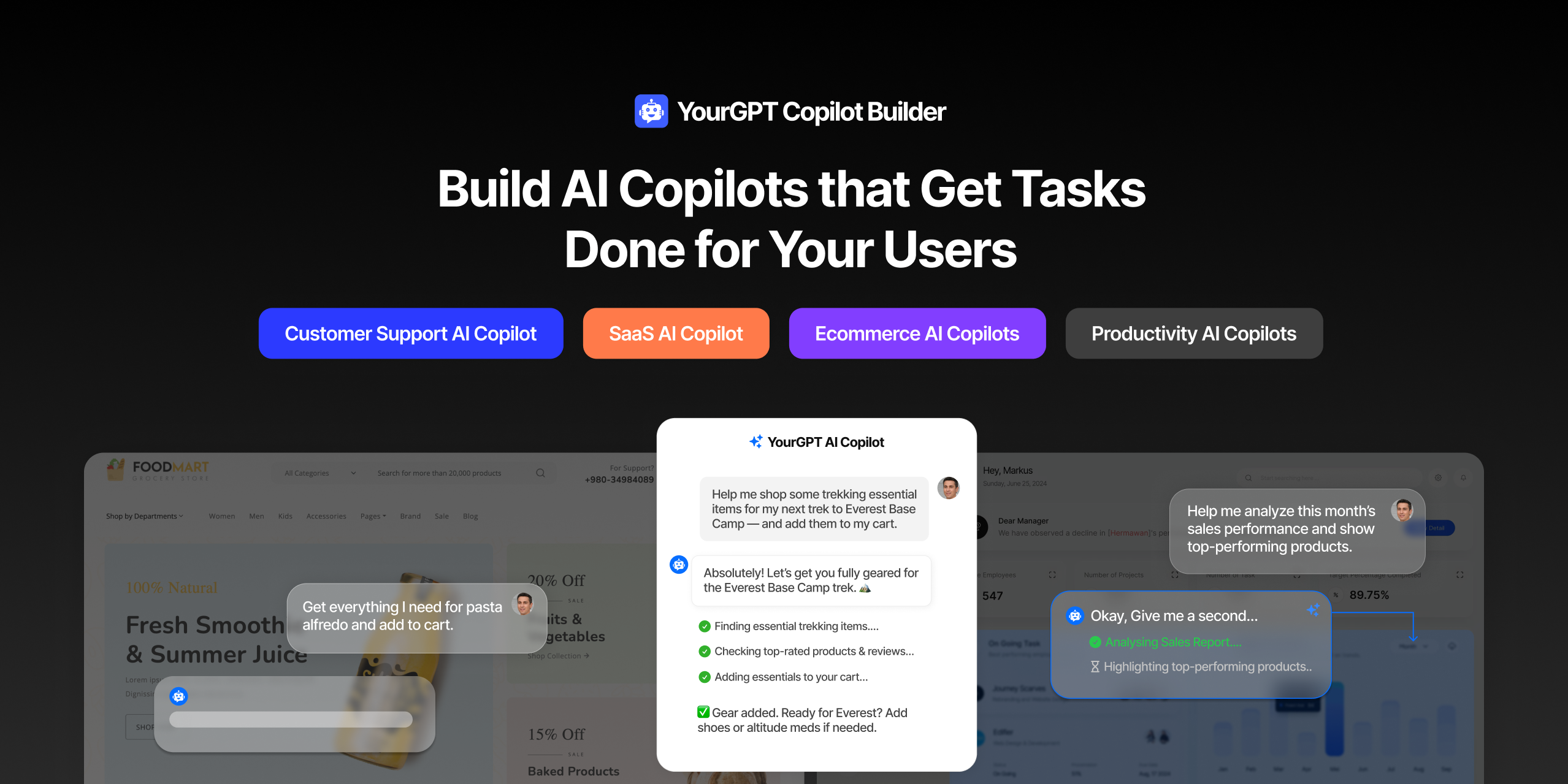
