From Reactive to Autonomous: The Evolution of Customer Support (2016–2026)

In the last ten years, customer service has changed more than it did in the twenty years before that.

For much of that earlier period, support was slow and often frustrating. People waited hours or days for a reply, repeated the same details across channels, and dealt with systems that were not very good at understanding what they needed. The tools improved over time, but the overall experience changed much more slowly.

Then AI raised the bar. Systems like ChatGPT made it easier for millions of people to experience faster and more flexible conversations firsthand. That changed what people expected from customer support.

This blog post covers how that shift happened: the forces that kept pushing customer support AI forward, the moments that changed the pace of the industry, and how the field moved from reactive service toward autonomous resolution.

Other eras had their turning points. Telephone support took years to become standard. Email support took years to spread through the 1990s. The shift from rule-based bots to systems that could handle language much more flexibly happened much faster.

What companies were running in 2018 and what was possible by 2023 were not just different points on the same curve. They were very different support experiences.

The speed mattered, but so did the timing. Technology improved at the same time that customer expectations were rising and manual support costs were becoming harder to control. There was no long adjustment period between one shift and the next.

Inside companies, the pressure was already there. Leaders were being asked to do more with the same team size or less. What changed was that the gap between what customers expected and what older tools could deliver became much easier to see.

Customers were expecting fluid, context-aware interactions, but were still getting queue times, repeated verification, and rigid workflows.

The companies that responded well were not only the ones investing in technology. They were the ones that recognized all three pressures moving at once and acted before the gap became too large.

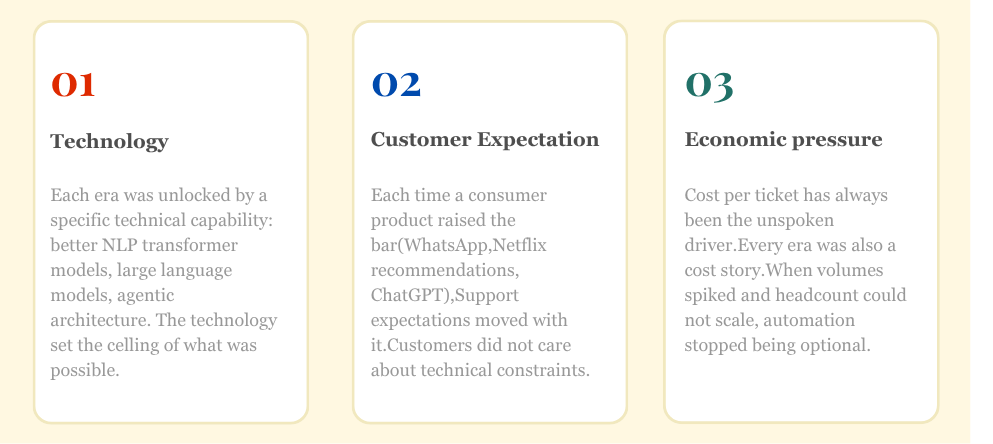

Technology mattered a lot, but it was not the only thing moving underneath these shifts.

Every major shift in support this decade came from three pressures converging at once. What changed was not just the technology itself, but the interaction between improving technical capability, rising customer expectation, and the growing cost of continuing to run support manually.

These forces never moved at the same speed. In each phase of the decade, one usually supplied more of the momentum than the others. That difference is what gave each era its particular shape, and it is the key to understanding what followed.

Most of the change across this decade happened slowly, then all at once. These are the five specific moments that drew the lines. Each one is listed here with its immediate consequence. The longer story of what businesses did in response to each, how they adapted, what they got wrong, and what they got right, is covered in the era breakdown that follows.

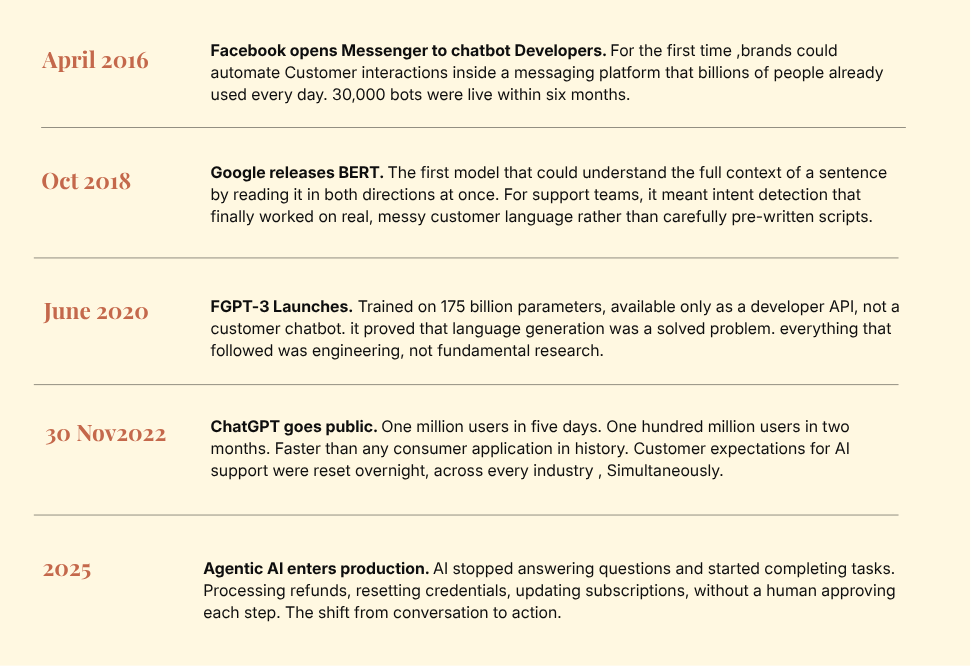

When Facebook opened Messenger to businesses, the real shift was not how sophisticated the bots were. It was distribution. Messenger already had 900 million monthly active users, so brands no longer had to persuade customers to download a new app or visit a separate support page.

That made Messenger a direct bridge between businesses and customers. Support moved into an app people were already using every day, giving chatbots distribution at a scale they had never had before.

Within six months, 30,000 bots were active on Messenger, growing to 100,000 by April 2017. The failures that followed were just as important: dead ends, no handoff to a human, and no connection to real business data. Those early mistakes became the blueprint for what later systems had to fix.

Before BERT, most support bots worked well only when customers used familiar wording. If the same request was phrased in a different way, the system often missed the meaning or gave the wrong response.

BERT improved how systems understood language in context. Instead of depending so heavily on fixed rules or a small set of expected phrases, companies could train models on many different patterns of language and get better results when customers said things in less predictable ways. That made tasks like intent detection, routing, and text classification more reliable in real support settings.

It did not transform customer support overnight. But it was a clear step forward. The technology underneath support was getting better at understanding what customers meant, not just spotting words it had seen before.

It also helped shape what came next. The broader direction became clearer: train models on broad amounts of text, then adapt them for specific uses. Later models pushed that further, and by 2020 GPT-3 showed that the field was moving beyond better understanding and toward much stronger language generation as well.

GPT-3 arrived in June 2020 as a developer API with 175 billion parameters, and almost nobody outside of machine learning circles noticed at the time. It was not a consumer product. There was no interface to log into. You had to apply for API access and write code to use it.

GPT-3 showed that these systems were starting to do something different. Earlier progress had made AI better at understanding text. GPT-3 offered an early glimpse of a model that could also generate long, fluent responses in a way that felt much more natural and flexible than what most support systems could produce at the time.

ChatGPT changed the customer support landscape by changing how people thought about AI. Before that, many AI interactions still felt narrow, inconsistent, or limited to specific tasks.

ChatGPT showed a more capable kind of interface. It could handle follow-up questions, keep track of context across a conversation, and respond in a way that felt more natural than what most people had seen before.

That change carried over into customer support. Once people had used a system like that, they were less patient with digital experiences that felt rigid or easily confused. The comparison was not always fair, but it changed expectations anyway.

The first four moments were mostly about improving understanding: better intent detection, better language comprehension, and more natural responses. The fifth shift, toward agentic AI, is different. It is about what happens after the system understands the request.

Earlier systems could explain what was happening. Agentic AI can carry out the work itself. In the case of a refund, that might mean checking the account, looking at the transaction, applying the credit, updating the record, and sending the confirmation without handing the case to a person.

That is what makes this stage different. The system is not only answering questions or suggesting the next step. In some cases, it is completing the task from start to finish across the tools and systems behind the support experience.

The change is real, but it is still uneven. Companies using agentic AI in production today tend to limit it to high-volume, well-defined issues where the rules are clear. The wider opportunity is obvious, but most businesses are still working through the harder part: making sure the data, controls, and policies are good enough for this to be used safely.

Between 2016 and 2026, customer support passed through five phases that look nothing like each other. Each one is covered below, including what businesses actually did in response to each shift, what worked, what failed, and why.

April 2016 felt like a starting pistol. Facebook opened Messenger to developers and brands moved fast. The pitch was compelling: always-on support, instant responses, no queues, no salary costs. Companies that had never considered chatbots had one live by the end of that quarter.

Facebook’s Messenger platform had 30,000 active chatbots within six months of opening to developers in April 2016. By April 2017, that number had grown to 100,000. A figure often cited as “10,000 bots in two months” appears in multiple sources but is not accurate.

The honest answer to what those bots could do is: not much. These were decision trees dressed up with a conversational interface. If a customer typed the exact phrase the bot expected, it worked. If they typed anything slightly different, it failed. There was no understanding happening, just pattern matching against a finite list of pre-written responses.

Three structural problems made this worse than it needed to be. The bots had no memory between sessions, so every conversation started cold. They had no access to business systems, so they could not look up an actual order or process a real request. And there was often no escalation path to a human agent when the bot hit a dead end, which it did constantly.
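To make the limitation concrete, here is a minimal sketch of how an exact-phrase bot of that era behaved. The phrases, replies, and the `rule_based_reply` function are hypothetical and written only for illustration; they are not taken from any real Messenger bot.

```python
# Hypothetical exact-phrase bot, in the style of many 2016-era deployments.
CANNED_REPLIES = {
    "where is my order": "You can track your order from the Orders page.",
    "reset my password": "Use the 'Forgot password' link on the sign-in page.",
}

FALLBACK = "Sorry, I don't understand."


def rule_based_reply(message: str) -> str:
    """Return a canned reply only when the message matches a known phrase exactly."""
    # Normalise case and trailing punctuation, then look for an exact match.
    key = message.strip().lower().rstrip("?.!")
    return CANNED_REPLIES.get(key, FALLBACK)


# The expected phrase works.
print(rule_based_reply("Where is my order?"))
# The same need in different words falls through to the fallback.
print(rule_based_reply("my package still hasn't arrived"))
```

The function keeps no state between calls, never touches an order system, and has nowhere to send the customer when it fails, which mirrors the three structural problems described above.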

“We got really overhyped, very quickly. Developers were given only a couple of weeks before launch, which wasn’t nearly enough to build quality bots.”

By 2017, a backlash had set in. The industry had learned that automation without intelligence was not a shortcut. It was a different kind of frustration delivered at higher speed. The specific design mistakes made at scale during this period became the checklist that the next generation of tools was built to avoid.

What 2016 and 2017 made clear was that fully automated support was still limited. That pushed the industry toward a more practical question: what if AI did not replace support agents, but made them more capable? That shift in thinking shaped 2018 and 2019 and quietly laid the groundwork for what came next.

BERT (Bidirectional Encoder Representations from Transformers), released by Google in 2018, was one of the first widely adopted models to read a sentence in both directions at once, so each word was interpreted in light of the full context around it. A single pre-trained model could be adapted for intent detection, sentiment analysis, named entity recognition, and ticket routing. It was described at the time as the “Swiss army knife” of NLP tasks. Google Duplex, sometimes cited as the NLP milestone of this era, was a limited restaurant-booking demonstration. BERT was the real shift for language understanding in production systems.

During this period, language technology was getting noticeably better, and support teams were starting to use it in more practical ways.

The impact showed up less in customer-facing bots and more in the tools working behind the scenes for support teams. AI started helping teams reply faster to routine inquiries, classify tickets, and spot urgency quickly.

At the same time, cloud CRM systems were becoming more common, which meant customer data was easier to access and use inside these tools. Better language systems and better access to structured business data came together in 2018 and 2019. That was the period when much of the foundation for modern AI support started to take shape.

Two things happened in 2020 that permanently changed the trajectory of customer support. Much of the world went into lockdown in the spring, and GPT-3 launched in June. They were unrelated events that amplified each other in ways nobody planned for.

GPT-3 was released by OpenAI in June 2020 with 175 billion parameters. It was available only as a developer API, not a consumer product. This distinction matters: GPT-3 was not a chatbot. It was a research and development tool that proved language generation was no longer a fundamental barrier. This point gets blurred in a lot of retrospective writing on this period, and it changes how you interpret the 2022 ChatGPT moment.

COVID-19 did not introduce AI to customer support. It forced companies to scale what they already had, far faster than anyone was comfortable with. Physical service centres closed. Call volumes surged in sectors like healthcare, financial services, and retail by hundreds of percentage points. Hiring was frozen. The businesses that had invested in automation infrastructure during 2018 to 2019 had something to fall back on. The ones that had not were exposed immediately.

The pandemic also permanently shifted customer behaviour in ways that outlasted the crisis. People who had never used live chat tried it out of necessity, found it faster than phone support, and did not go back. This created lasting demand for digital-first support channels that companies now had to staff and maintain properly.

The defining technical capability of this era was multi-turn context. Earlier chatbots treated every message as a standalone input. By 2021, AI systems could hold the thread across an entire conversation, remembering that a customer mentioned their order number four exchanges ago or that they had already tried the suggested fix. That sounds like a small improvement. In practice it was the difference between a bot that felt like a real conversation and one that felt like submitting a form with extra friction.
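A rough sketch of what holding the thread means in practice: the system keeps a small piece of state per conversation and updates it on every turn. The `Conversation` class, the `#` plus digits order-number format, and the "restart" keyword check are all assumptions made for this example, not a description of any specific product.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Conversation:
    """Carries facts forward so later turns can use them (illustrative only)."""
    order_number: Optional[str] = None
    fixes_tried: List[str] = field(default_factory=list)
    history: List[str] = field(default_factory=list)

    def add_turn(self, message: str) -> None:
        self.history.append(message)
        # Assumed order-number format for this example: '#' followed by digits.
        match = re.search(r"#(\d+)", message)
        if match:
            self.order_number = match.group(1)
        # Crude keyword check standing in for real intent detection.
        if "restart" in message.lower():
            self.fixes_tried.append("restart")

    def needs_order_number(self) -> bool:
        return self.order_number is None


convo = Conversation()
convo.add_turn("Hi, my order #48213 arrived damaged")
convo.add_turn("I already tried to restart the device")
convo.add_turn("Can you send a replacement?")

# The last message contains no order number, but the conversation still has it.
print(convo.order_number, convo.fixes_tried)
```

A stateless bot would have to ask for the order number again on the third message. Here the answer is already in the conversation state.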

On November 30, 2022, OpenAI released ChatGPT to the public. What followed was unlike anything the technology industry had produced before in terms of raw consumer adoption speed.

ChatGPT reached one million users within five days of launch and 100 million monthly active users within two months. TikTok took nine months to reach that milestone. Instagram took two and a half years. By mid-2025, ChatGPT was reported to have around 700 million weekly active users, and OpenAI had said in 2023 that teams at more than 80% of Fortune 500 companies had adopted it within nine months of launch.

LLMs solved the core problem that had limited every previous generation of support automation: genuine language understanding. Not keyword matching. Real comprehension of ambiguous phrasing, typos, context-dependent meaning, and implicit requests. A customer typing “my thing still hasn’t shown up” could now be understood correctly instead of triggering a “sorry, I don’t understand” error.

But understanding language and delivering reliable customer service are two different problems. LLMs introduced hallucination. A model capable of understanding any question is also capable of confidently producing a plausible-sounding but entirely incorrect answer. Telling a customer their refund had been processed when it had not. Citing a returns policy that did not exist. In early deployments, this was not an edge case. It happened regularly.

The industry’s practical answer was Retrieval-Augmented Generation, known as RAG. Rather than letting an LLM generate freely from its training data, RAG pipelines retrieve specific, verified documents from a company’s own knowledge base and instruct the model to answer using only that context. Order records, policy pages, CRM history. The model provides language capability. The retrieved documents provide the accuracy constraint.
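The pattern can be sketched in a few lines. This is a simplified illustration under stated assumptions: the knowledge base entries are invented, the retriever ranks by plain word overlap as a stand-in for the vector search most real pipelines use, and the final call to a language model is left out, so the code stops at building the grounded prompt.

```python
import re

# Hypothetical knowledge base entries; a real deployment would pull these from
# policy pages, order records, or CRM history.
KNOWLEDGE_BASE = {
    "returns-policy": "Items can be returned within 30 days of delivery with a receipt.",
    "shipping-times": "Standard shipping takes 3 to 5 business days.",
    "refund-timing": "Refunds are issued to the original payment method within 7 days.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 2) -> list:
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in KNOWLEDGE_BASE.items()]
    scored.sort(key=lambda item: item[0], reverse=True)
    # Drop documents that share no words with the question at all.
    return [(doc_id, text) for score, doc_id, text in scored[:top_k] if score > 0]


def build_prompt(question: str) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    docs = retrieve(question)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in docs)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so and offer a human handoff.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


print(build_prompt("How long does standard shipping take?"))
```

The instruction to admit when the context has no answer matters as much as the retrieval step. Without it, the model can still fall back on a confident guess.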

The current era is defined not by improved language understanding but by action. Previous generations of AI customer support talked. The current generation does the work.

An autonomous AI agent in 2026 can check an order status, process a standard refund within policy limits, update a subscription plan, reset account credentials, create a follow-up task with full context, and send a confirmation email. All within a single customer interaction, without a human involved at any step.

In businesses with mature agentic deployments, agents reliably handle customer identity verification, order lookup and status, standard refunds and credits within policy limits, subscription and billing changes, credential resets, and post-resolution follow-up. What they still do poorly: situations requiring negotiation outside defined policy boundaries, high-stress emotional interactions where tone matters significantly, and genuinely novel problem types not represented in training or knowledge base data.
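The boundary between "handle it" and "hand it over" can be written down as explicit rules. The sketch below is one way to picture a refund flow with a policy limit and an escalation path. The `AUTO_REFUND_LIMIT` value, the in-memory `ORDERS` store, and the `handle_refund_request` function are all hypothetical; a production agent would call real order and payment systems.

```python
from dataclasses import dataclass

# Assumed policy limit for this example; real limits come from documented policy.
AUTO_REFUND_LIMIT = 50.00


@dataclass
class Order:
    order_id: str
    amount_paid: float
    refunded: bool = False


# Stand-in for an order system the agent is connected to.
ORDERS = {"A100": Order("A100", 19.99), "A200": Order("A200", 240.00)}


def handle_refund_request(order_id: str) -> dict:
    """Resolve a refund end to end, or escalate when it falls outside policy."""
    order = ORDERS.get(order_id)
    if order is None:
        return {"status": "escalated", "reason": "order not found"}
    if order.refunded:
        return {"status": "declined", "reason": "already refunded"}
    if order.amount_paid > AUTO_REFUND_LIMIT:
        # Outside the policy boundary: hand the case to a person.
        return {"status": "escalated", "reason": "amount exceeds auto-refund limit"}
    order.refunded = True  # apply the credit and update the record
    return {
        "status": "refunded",
        "amount": order.amount_paid,
        "confirmation": f"Refund of {order.amount_paid:.2f} issued for order {order_id}.",
    }
```

In this sketch, a request for order `A100` is refunded and confirmed in one step, while `A200` is escalated because it exceeds the assumed limit. Every path ends in either a completed action or a handoff, never a dead end.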

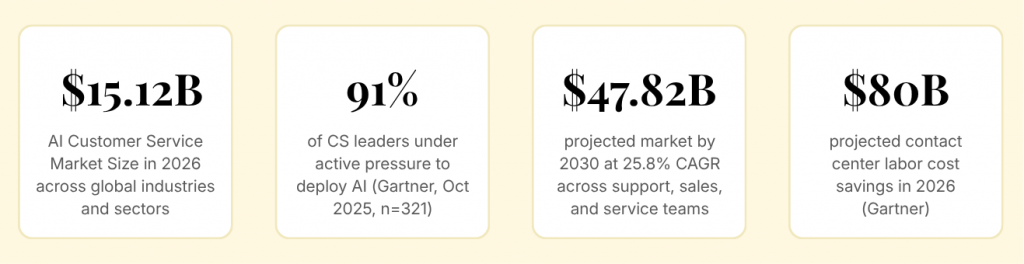

The pressure to deploy is real. A Gartner survey of 321 customer service leaders in October 2025 found that 91% were under active pressure to implement AI in 2026. Pressure and readiness are not the same thing, and the gap between them is where most underperforming deployments live.

The technology is no longer the problem. Organizational readiness is. Agentic AI is smart enough now to handle real end-to-end resolution on a large scale.

All the progress, and one thing has stayed stubbornly in place. Customers are fine with AI handling the simple stuff. When something genuinely goes wrong, they want a person.

By some industry estimates, as much as 75% of customer inquiries can now be resolved by AI tools without human intervention. But the situations where people still want a human have not shrunk as AI improved. They have become more clearly defined.

The businesses that will lead customer support by 2029 are not the ones buying the most advanced tools. They are the ones that have done the unglamorous work: clean data, documented policies, reliable escalation paths, and a clear view of where human judgment still matters.

The technology is no longer the constraint. Organizational readiness is.

That said, four shifts are coming that no amount of readiness can delay.

Proactive support becomes the default. The reactive model, where support begins when a customer notices a problem and reaches out, is already breaking down at the edges. Companies with mature data infrastructure are detecting failed payments, delayed deliveries, and account anomalies before the customer contacts them. By 2029, waiting for customers to raise issues will feel as outdated as email ticketing felt in 2022.

Voice AI will grow alongside text. Most AI investment in support over the last four years has gone into chat and messaging. Voice has lagged. That gap is closing faster than most people in the industry are acknowledging.

The next wave will not be businesses choosing between voice and text. It will be businesses offering both with the same context, the same resolution capability, and no degradation in quality based on the channel a customer chooses.

Governance becomes impossible to ignore. As AI takes more autonomous actions on customer accounts, accountability moves from theoretical to urgent. If an agent processes the wrong refund, changes the wrong subscription, or sends the wrong confirmation, someone has to be responsible, and there has to be a record.

The companies building audit trails and human oversight into their agentic deployments now will not just be safer. They will be the ones customers and regulators trust when the ones that skipped that step start making headlines.
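What "a record" means can be quite simple at its core: every autonomous action is written down with who did it, what was done, to what, and whether a person signed off. The following is a minimal sketch under that assumption. The field names, the `record_action` helper, and the in-memory list are illustrative only; a real audit trail would use durable, append-only storage.

```python
import json
from datetime import datetime, timezone

# Stand-in for append-only storage; each entry is one JSON line.
AUDIT_LOG = []


def record_action(actor, action, target, details, approved_by=None):
    """Append one audit entry describing an action taken on a customer account."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,              # which agent or person acted
        "action": action,            # what was done
        "target": target,            # which record was affected
        "details": details,          # the specifics needed to review or reverse it
        "approved_by": approved_by,  # None means no human approved it
    }
    AUDIT_LOG.append(json.dumps(entry, sort_keys=True))
    return entry


entry = record_action("refund-agent", "refund", "order:A100", {"amount": 19.99})
print(AUDIT_LOG[-1])
```

Leaving `approved_by` empty for fully autonomous actions makes it easy to later separate what the agent did on its own from what a person approved.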

Multi-agent systems will become more common. A single AI system will not handle everything well. More companies will move toward setups where different agents handle different parts of the work, such as identity checks, policy lookup, resolution steps, or follow-up. The challenge will be coordination, not just capability.

What the first three of these shifts have in common is that none of them is primarily a technology problem. Proactive support requires data discipline. Voice consistency requires infrastructure investment. Governance requires organizational will. The businesses that treat AI deployment as a technical project will hit all three of these walls. The ones that treat it as an operational transformation will not.

April 2016 marked the start when Facebook opened Messenger to developers. What followed were decision-tree bots that only worked if users typed exact phrases. There was no memory, no system access, and no escalation path. The technology existed, but it wasn’t useful.

A chatbot explains how to solve a problem. An autonomous agent actually solves it. It can find transactions, apply refunds, and confirm actions without human involvement. The difference is between describing resolution and delivering it.

They failed for three consistent reasons: no memory, no integration with business systems, and no escalation path. These gaps left customers stuck, and the same mistakes still appear in modern deployments.

ChatGPT reset expectations. After experiencing it, customers found traditional chatbots unacceptable. It introduced contextual understanding and flexible conversation, raising the standard overnight.

RAG (Retrieval-Augmented Generation) ensures AI responses are grounded in verified company data instead of generating guesses. This prevents incorrect answers and makes AI reliable in real support environments.

No. AI handles routine queries, while humans manage complex and high-stakes issues. The most effective approach is a hybrid model where both work together.

Readiness depends on having accurate data, clearly documented policies, and a reliable escalation path to humans. Without these, deployments often fail.

The 80% figure refers to a 2029 forecast, not current performance. Today, results vary widely depending on implementation quality and data readiness.

Multi-turn context allows AI to remember previous messages, eliminating repetitive inputs and making conversations feel continuous and natural.

Focus on high-quality data, seamless human escalation, and support for the human agents who handle complex cases. These factors determine whether AI deployments succeed.

The decade between 2016 and 2026 did not just add new tools to customer support. It changed what support is supposed to do. The bar moved from deflecting to resolving, from reacting to anticipating, from handling volume to handling it well.

What separated the companies that kept up from the ones that fell behind was never just the technology they bought. It was whether they had built the foundation to use it: clean data, connected systems, clear escalation paths, and a genuine understanding of where human judgment still belongs in the process.

That work is harder than buying a platform. But it is the only part that compounds.

Most AI deployed in support today still stops at the answer. It understands the question, generates a response, and hands the burden back to the customer or the agent. The resolution still requires someone else to act. That gap, between understanding and doing, is exactly what the next phase of support has to close.

YourGPT is built for that gap. Not just to answer, but to resolve completely. Connected to the systems that hold your customer data, trained on your policies, and designed to take action end to end without passing the work back. The businesses that will lead support in 2029 are building that capability now.

The race is not to full automation. It is to reliable automation. That is the only destination worth building toward, and it is the one we are building for.

Most AI stops at answering the customer. YourGPT goes further. It connects with your CRM, billing system, and backend tools to perform multi-step actions, so your team can focus on the work that actually needs a human.
