AI Agent ROI: How to Measure Business Value and Returns

AI improves speed, but real ROI appears when workflows no longer depend on a human queue and can be completed end to end.

Autonomous agents shift cost structure by removing routine work from human flow, reducing cost per case, improving response time, and scaling capacity without linear hiring.

Platforms like YourGPT help operationalize this by turning defined workflows into outcome-driven systems across support, sales, and operations, enabling teams to move from assistance to execution.

Most AI business cases fail in finance reviews for the same reason: they measure the wrong thing.

They count hours saved. They multiply reclaimed minutes by headcount, dress the number in a spreadsheet, and call it ROI. The projection looks clean. The demo looks convincing. Then the next quarter arrives and the savings are somehow still pending.

The problem is not the math. It is the model.

There is a real economic difference between AI that helps a person work faster and AI that completes a workflow without a person in the loop at all. One improves labor efficiency. The other changes what labor is required. Conflating the two, which most vendor pitches and internal business cases do, produces projections that are structurally optimistic.

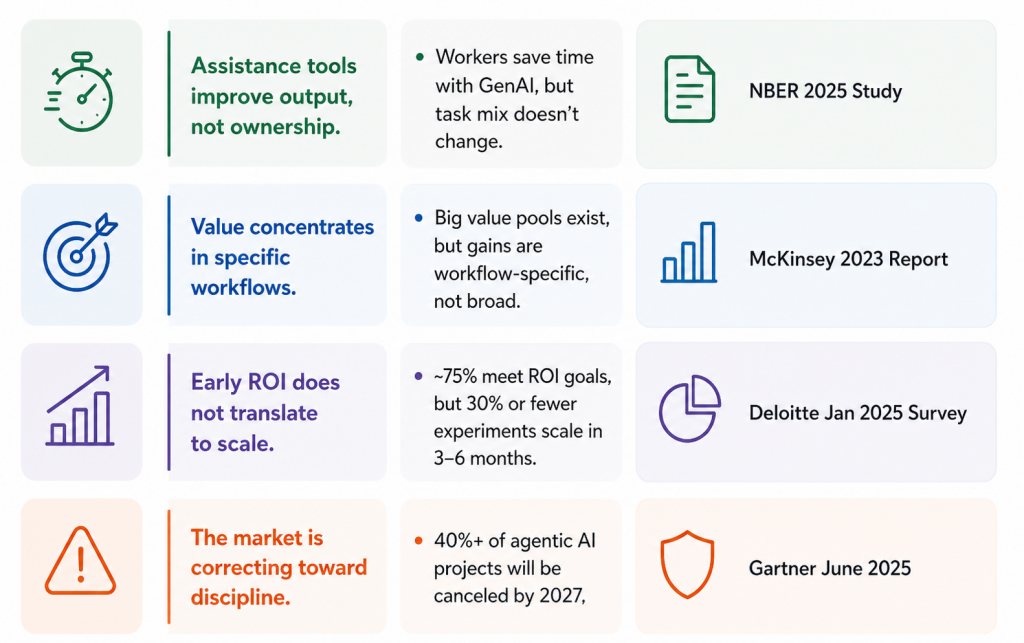

The evidence for this gap is already in the field. An NBER study across 66 firms and 7,137 knowledge workers found that employees using a generative AI assistant saved roughly two hours a week on email, yet their overall task mix did not change. That is exactly what assistance does. It smooths the workday. It does not rewrite the operating model.

Autonomy is a different claim entirely. It begins the moment a unit of work no longer has to land in a human queue. When an agent takes a workflow from intake to completion, staying inside policy and escalating only genuine exceptions, the cost curve changes character. You stop paying full human labor for every unit of output.

In this blog, we break down where that line actually sits, which workflows cross it, and what an honest ROI calculation looks like when you get the model right.

The distinction shows up at the point where work either stays with a person or leaves the queue entirely.

Time savings apply when a human still owns the task. In that model, AI functions as an assistant. It reduces effort per task, improves speed, and increases throughput. The evidence supports this. In customer support, AI guidance has delivered measurable productivity gains, especially for less experienced agents. But the structure of the work remains unchanged. The task still enters a human queue, a person still has to complete it, and the cost of labor remains tied to every unit of output. This is efficiency within the same system, not a change to the system itself.

Autonomous agents operate on a different model. They do not just make a person faster. They remove the need for a person in the workflow, at least for the repeatable portion of the work. When that happens, the economics shift in ways time savings alone cannot explain: cost per case falls, cycle time compresses, and capacity scales without linear hiring.

This is the line most teams miss. They evaluate both models using the same ROI lens and assume the outcomes are comparable. They are not.

Only one of these changes the cost structure.

An agent is not defined by how well it can respond. It is defined by whether it can complete the work.

From a financial perspective, the distinction is simple. If a task still returns to a human for execution, validation, or correction, the system is acting as an assistant. The economics have not changed.

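An autonomous agent only becomes meaningful when it can take a workflow from intake to completion within defined boundaries. That requires a full chain of capabilities: understanding the request, acting in the connected systems within policy, verifying that the result is actually complete, and escalating genuine exceptions to a person.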

Most systems can demonstrate parts of this. Few can do all of it reliably in production.

The failure point is not language generation. It is completion integrity.

When a dependency fails, a record is incomplete, or a rule does not apply cleanly, weaker systems still produce a plausible response. But the task is not finished.

That gap is not cosmetic. It determines whether the workflow has actually left the human queue.

This is why verification and escalation logic matter more than response quality. They decide whether the system can own the outcome, not just assist with the process.

Without that, you do not have an autonomous agent. You have a faster interface on top of the same work.
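To make completion integrity concrete, here is a minimal Python sketch (not a YourGPT API): a case counts as completed only when the action succeeds and a separate check confirms the result; anything else escalates. `Case`, `run_case`, `refund`, and `refund_verified` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class Outcome(Enum):
    COMPLETED = "completed"  # the work left the human queue
    ESCALATED = "escalated"  # routed to a person as a genuine exception


@dataclass
class Case:
    case_id: str
    data: dict = field(default_factory=dict)


def run_case(
    case: Case,
    act: Callable[[Case], Any],
    verify: Callable[[Case, Any], bool],
) -> Outcome:
    """Close a case only if the action succeeded AND its result verifies."""
    try:
        result = act(case)
    except Exception:
        # A failed dependency is not a finished task, however plausible the reply.
        return Outcome.ESCALATED
    if not verify(case, result):
        # Incomplete record or a rule that did not apply cleanly: hand it off.
        return Outcome.ESCALATED
    return Outcome.COMPLETED


# Hypothetical refund workflow used only to exercise run_case.
def refund(case: Case) -> dict:
    if "order_id" not in case.data:
        raise KeyError("order_id")  # missing record, so the task cannot complete
    return {"status": "refunded", "order_id": case.data["order_id"]}


def refund_verified(case: Case, result: dict) -> bool:
    return result.get("status") == "refunded" and result.get("order_id") == case.data["order_id"]


print(run_case(Case("c1", {"order_id": "A-100"}), refund, refund_verified))  # Outcome.COMPLETED
print(run_case(Case("c2"), refund, refund_verified))  # Outcome.ESCALATED
```

The design choice is that verification is a separate function from the action: the agent's own output is never its own proof of completion.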

Autonomous agents only matter when they change the unit economics of a workflow. Not when they make a step faster, but when they remove the need for that step to enter a human queue at all.

That difference is structural:

| | AI Assistant | Autonomous Agent |
|---|---|---|
| What improves | A step inside the workflow | The workflow itself |
| Human still owns the task? | Yes | Only exceptions |
| Economic effect | Faster throughput, same headcount | Lower cost per case, faster cycle time, scalable capacity |

Queue removal creates forms of value that a time-saved calculation will never capture.

1. Cost detachment: When a system handles a workflow end to end, the business stops paying full human labor for every unit of output. Cost shifts from headcount to infrastructure. For high-volume, repetitive work, this produces a lower and more predictable cost curve.

2. Speed with revenue consequences: Faster response is not just a service metric. It changes outcomes. In sales, lead response time affects conversion. In support, faster resolution reduces repeat contacts. In finance, faster processing improves cash visibility. Time directly translates into revenue and cost, not just productivity.

3. Concentration of human judgment: A well-scoped deployment does not remove human work. It reshapes it. Routine cases are handled by the system, while people focus on exceptions: disputes, ambiguous requests, edge cases, and relationship-sensitive moments. This increases the value of human attention instead of spreading it across repetitive tasks.
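Cost detachment can be illustrated with simple per-case arithmetic. The sketch below assumes, hypothetically, a $6.00 fully loaded human cost per case, an assistant that trims 25% of handling effort, and an agent that completes 80% of cases at $0.50 each while exceptions still cost full human labor. None of these figures come from a benchmark, and the function names are illustrative.

```python
from decimal import Decimal


def assistant_cost_per_case(human_cost: Decimal, effort_saved: Decimal) -> Decimal:
    """Assistant model: a person still handles every case, just with less effort."""
    return human_cost * (1 - effort_saved)


def agent_cost_per_case(human_cost: Decimal, agent_cost: Decimal,
                        completion_rate: Decimal) -> Decimal:
    """Agent model: completed cases run at agent cost; exceptions cost full human labor."""
    return completion_rate * agent_cost + (1 - completion_rate) * human_cost


# Hypothetical inputs for illustration only.
HUMAN_COST = Decimal("6.00")
AGENT_COST = Decimal("0.50")

assistant = assistant_cost_per_case(HUMAN_COST, effort_saved=Decimal("0.25"))
agent = agent_cost_per_case(HUMAN_COST, AGENT_COST, completion_rate=Decimal("0.80"))
print(assistant, agent)  # 4.5000 1.6000
```

In the assistant function every case still carries human cost, so total cost grows with volume at labor rates. In the agent function only the exception share does.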

Together, these shifts define the difference between efficiency and structural change.

The question is not whether the system is impressive. It is whether anything has actually left the queue.

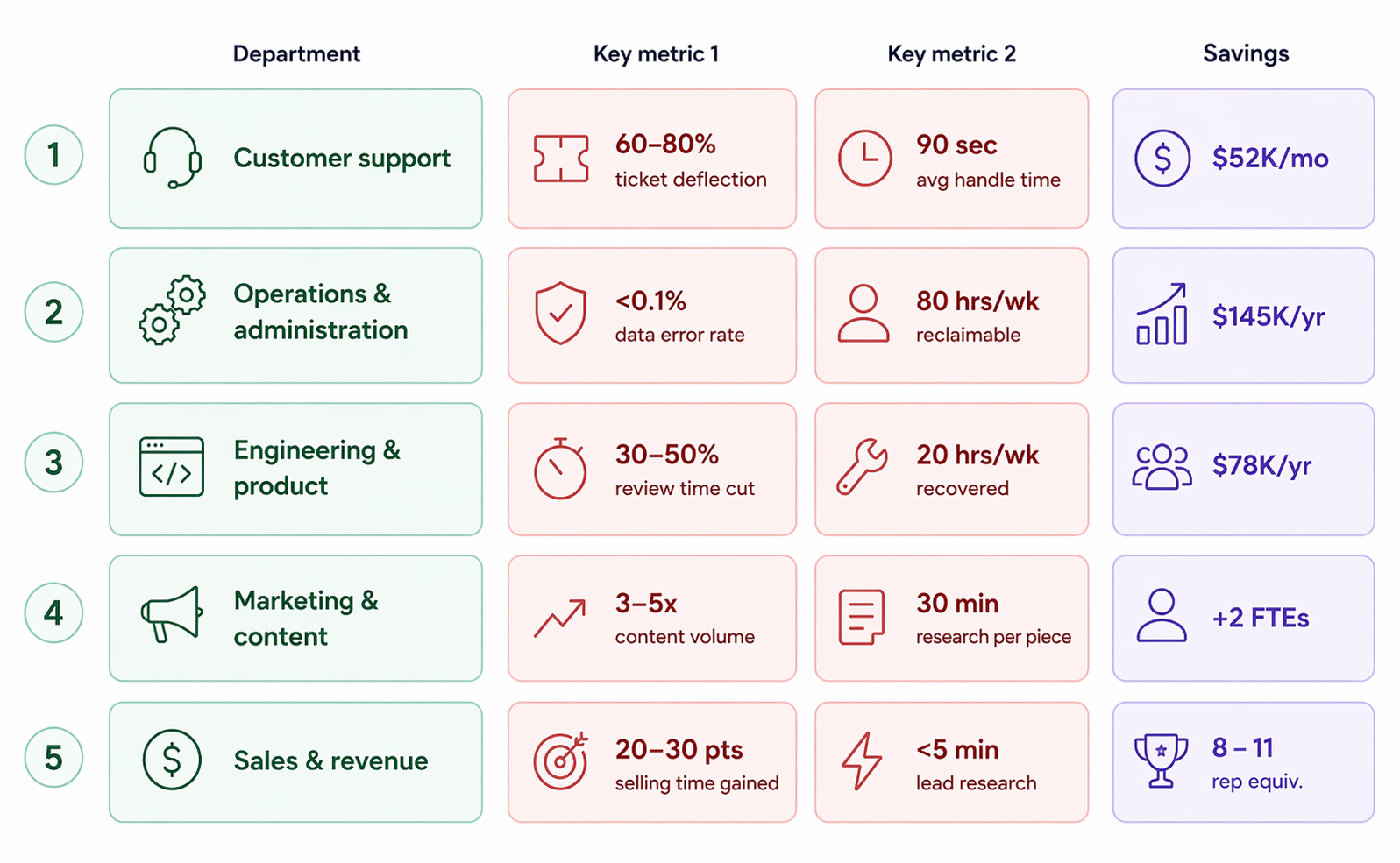

Returns do not show up evenly across the business. They concentrate in workflows that share the same traits: high volume, clear rules, structured inputs, and measurable outcomes. These are the environments where work can leave the human queue, and where the economics become visible.

Support is the clearest starting point because the unit economics are already visible. Teams track ticket volume, handling time, cost per resolution, escalation rate, and backlog. That makes it easy to see whether work is still flowing through a human queue or being completed autonomously.

When workflows are well-scoped, agents move from assisting responses to resolving cases. That is where cost per ticket drops and response time compresses at the same time.
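One rough way to check whether support work has actually left the queue is to separate tickets resolved with no human touch from tickets a person had to act on. This sketch assumes each ticket record carries a hypothetical `human_touched` flag; `Ticket` and `queue_metrics` are illustrative names, not part of any specific helpdesk API.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    ticket_id: str
    resolved: bool
    human_touched: bool  # True if any person had to act on the ticket


def queue_metrics(tickets: list[Ticket]) -> dict[str, float]:
    """Share of tickets completed with no human touch vs. routed to a person."""
    total = len(tickets)
    if total == 0:
        return {"autonomous_resolution_rate": 0.0, "human_touch_rate": 0.0}
    autonomous = sum(1 for t in tickets if t.resolved and not t.human_touched)
    touched = sum(1 for t in tickets if t.human_touched)
    return {
        "autonomous_resolution_rate": autonomous / total,
        "human_touch_rate": touched / total,
    }


sample = [
    Ticket("t1", resolved=True, human_touched=False),
    Ticket("t2", resolved=True, human_touched=False),
    Ticket("t3", resolved=True, human_touched=True),   # assisted, not autonomous
    Ticket("t4", resolved=False, human_touched=True),  # escalated, still open
]
print(queue_metrics(sample))
# {'autonomous_resolution_rate': 0.5, 'human_touch_rate': 0.5}
```

An AI-drafted reply that a person still had to send counts as human-touched here, which is the point: assistance does not remove the case from the queue.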

Finance ops, procurement, claims intake, onboarding, and document workflows are often stronger candidates than customer-facing work. Inputs are structured, rules are explicit, and outcomes are clearly defined.

In these systems, “done” is binary. A request is approved, routed, matched, or flagged. That clarity allows agents to operate reliably and makes economic impact easier to measure. The advantage here is consistency, not visibility.

The near-term value is not in replacing engineering judgment. It is in removing recurring friction.

Issue triage, regression runs, dependency checks, documentation updates, and environment validation consume time but do not require deep creativity. Removing this interrupt work frees high-cost engineering attention for tasks that actually require it.

In marketing and content work, the strongest use cases are not creative outputs. They are the repetitive layers around them.

Gathering inputs, assembling briefs, summarizing performance, and preparing research consume skilled time without requiring much judgment. Agents shift this work out of the queue so teams spend more time deciding and less time preparing.

In sales, speed directly affects revenue. Lead qualification, routing, enrichment, and initial response are all time-sensitive and repeatable.

When these workflows leave the queue, response time drops to minutes or seconds. That changes pipeline velocity without requiring linear headcount growth.

If you want a business case that survives contact with finance, stop leading with reclaimed hours and start with completed workflow economics.

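Build the case from a small set of value terms (queue-removed value, cycle-time value, error cost, and recovered capacity), all measured against the full cost of making the system work.

Each term matters.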

Queue-removed value is the core number for autonomous workflows.

If the agent resolves 80,000 routine requests a year and the human fully loaded cost per completed request is $6.20 while the agent costs $0.55 per completed request, the queue-removed value is 80,000 × ($6.20 − $0.55) = $452,000 per year.

That is a stronger starting point than “we saved the team 12 minutes per ticket,” because it models the work as output, not effort.
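The same arithmetic as a small Python function, using the 80,000-request, $6.20 versus $0.55 example. `Decimal` avoids binary floating-point rounding on currency; the function name is illustrative.

```python
from decimal import Decimal


def queue_removed_value(volume: int, human_cost: Decimal, agent_cost: Decimal) -> Decimal:
    """Yearly value of routine requests completed without entering a human queue."""
    return volume * (human_cost - agent_cost)


value = queue_removed_value(80_000, Decimal("6.20"), Decimal("0.55"))
print(value)  # 452000.00
```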

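Some workflows create value because they happen faster, not just cheaper.

Examples: in sales, faster lead response improves conversion; in support, faster resolution reduces repeat contacts; in finance, faster processing improves cash visibility.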

Cycle-time value is where autonomous agents often beat assistant tools by a wide margin. The agent does not wait for someone to become available.
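For the sales case, one way to price cycle time is the extra conversions that faster response produces. This is a sketch under loudly stated assumptions: the lead count, the two conversion rates, and the deal value are hypothetical, and the real rates must come from your own measured data. It counts revenue; applying a margin is a reasonable refinement.

```python
from decimal import Decimal


def cycle_time_value(leads: int, slow_conversion: Decimal,
                     fast_conversion: Decimal, deal_value: Decimal) -> Decimal:
    """Extra revenue from converting more leads because response is faster."""
    return leads * (fast_conversion - slow_conversion) * deal_value


# Hypothetical figures; replace with your own measured conversion rates.
uplift = cycle_time_value(12_000, Decimal("0.040"), Decimal("0.046"), Decimal("2500"))
print(uplift)  # 180000.000
```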

Routine work does not stay cheap when it is wrong. Bad routing, duplicate entries, policy mistakes, missed follow-ups, or inconsistent support actions can create expensive downstream cleanup. If the workflow already has a measurable error pattern, price it.

What matters here is not whether agents are perfect. They are not. What matters is whether they are more consistent than the current process on the part of the workflow they actually own.
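Pricing an error pattern can be sketched the same way: compare error rates on the portion of work the agent owns and multiply by the cleanup cost per error. All inputs below are hypothetical. The function deliberately allows a negative result, because an agent that is less consistent than the current process adds cost rather than removing it.

```python
from decimal import Decimal


def error_cost_value(cases_owned: int, baseline_error_rate: Decimal,
                     agent_error_rate: Decimal, cost_per_error: Decimal) -> Decimal:
    """Cleanup cost avoided on the part of the workflow the agent actually owns.

    The result is negative when the agent is less consistent than the current process.
    """
    return cases_owned * (baseline_error_rate - agent_error_rate) * cost_per_error


# Hypothetical figures for illustration.
saved = error_cost_value(80_000, Decimal("0.030"), Decimal("0.012"), Decimal("40"))
worse = error_cost_value(80_000, Decimal("0.010"), Decimal("0.020"), Decimal("40"))
print(saved, worse)  # 57600.000 -32000.000
```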

Recovered capacity is the least abused form of “hours saved.” Use it only when the business has a credible path to using recovered time for something with economic value: more pipeline coverage, fewer hires, faster releases, better account handling, reduced backlog, or higher-value casework.

Recovered hours without a plan are a morale benefit, not a finance line.
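A minimal sketch of that rule: recovered hours are multiplied by the share that has a concrete redeployment plan, so hours with no plan contribute nothing. The hours, share, and hourly value are hypothetical inputs.

```python
from decimal import Decimal


def recovered_capacity_value(hours_recovered: Decimal, redeployed_share: Decimal,
                             value_per_hour: Decimal) -> Decimal:
    """Count recovered hours only to the extent there is a plan to redeploy them."""
    if not (Decimal(0) <= redeployed_share <= Decimal(1)):
        raise ValueError("redeployed_share must be between 0 and 1")
    return hours_recovered * redeployed_share * value_per_hour


with_plan = recovered_capacity_value(Decimal("4000"), Decimal("0.5"), Decimal("60"))
no_plan = recovered_capacity_value(Decimal("4000"), Decimal("0"), Decimal("60"))
print(with_plan, no_plan)  # 120000.0 0
```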

A clean evidence section is more useful than a benchmark table that overpromises. The data is consistent on one point: AI delivers real gains, but those gains depend on where it is applied and how the workflow is structured. Efficiency shows up early. Structural change does not.

The pattern is straightforward. Measurable gains exist, but they do not come from generic adoption. They come from selecting the right workflows and executing them with discipline.

Most agent deployments do not fail on the upside. They fail because the cost of making the system work was treated as incidental.

It is not incidental. It is the denominator.
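Putting the cost in the denominator can be sketched as net value over total cost. The $452,000 queue-removed value reuses the earlier request example; the cost figures and term names are hypothetical. Because that queue-removed value is already net of the per-case agent cost, the cost terms here should cover only what it leaves out (integration, supervision, upkeep), or the agent's run cost is counted twice.

```python
from decimal import Decimal


def agent_roi(value_terms: dict[str, Decimal], cost_terms: dict[str, Decimal]) -> Decimal:
    """Net return divided by the full cost of making the system work."""
    total_cost = sum(cost_terms.values(), Decimal(0))
    if total_cost <= 0:
        raise ValueError("cost terms must sum to a positive amount")
    total_value = sum(value_terms.values(), Decimal(0))
    return (total_value - total_cost) / total_cost


# Hypothetical figures. queue_removed is already net of per-case agent cost,
# so the cost terms cover only what it leaves out (no double counting).
values = {"queue_removed": Decimal("452000"), "cycle_time": Decimal("0")}
costs = {"integration": Decimal("150000"), "supervision_and_upkeep": Decimal("76000")}
print(agent_roi(values, costs))  # 1
```

A result of 1 means the deployment returned its full cost once over; refusing an empty cost dictionary encodes the idea that the denominator is never zero.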

| Workflow shape | Economic outlook | Why |
|---|---|---|
| High volume, clear rules, structured data, reversible actions | Strong now | Cheap to measure, cheap to supervise, easy to contain |
| High volume, moderate ambiguity, good system access | Promising with discipline | Real upside, but exception design matters |
| Low volume, high judgment, relationship-heavy work | Weak near-term | Human review erases most of the gain |
| Irreversible actions, unclear rules, high downstream risk | Narrow first or wait | Downside dominates until controls improve |
| Messy data, fragmented systems, no workflow owner | Bad candidate | The cleanup bill lands before the return does |
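The workflow-shape table can be turned into a rough screening rule. This is one possible reading of it, not a definitive scoring method: the boolean traits, the three-level `rules` field, and the order of checks (disqualifiers first) are assumptions, and `WorkflowShape`, `economic_outlook`, and `routine_routing` are hypothetical names.

```python
from dataclasses import dataclass, replace
from typing import Literal


@dataclass
class WorkflowShape:
    high_volume: bool
    rules: Literal["clear", "moderate", "unclear"]
    structured_data: bool
    reversible_actions: bool
    high_judgment: bool
    good_system_access: bool
    clean_data: bool
    has_owner: bool


def economic_outlook(w: WorkflowShape) -> str:
    """Rough screen that mirrors the table, checking disqualifiers first."""
    if not (w.clean_data and w.good_system_access and w.has_owner):
        return "Bad candidate"  # the cleanup bill lands before the return
    if not w.reversible_actions or w.rules == "unclear":
        return "Narrow first or wait"  # downside dominates until controls improve
    if not w.high_volume or w.high_judgment:
        return "Weak near-term"  # human review erases most of the gain
    if w.rules == "clear" and w.structured_data:
        return "Strong now"
    return "Promising with discipline"  # real upside, exception design matters


routine_routing = WorkflowShape(high_volume=True, rules="clear", structured_data=True,
                                reversible_actions=True, high_judgment=False,
                                good_system_access=True, clean_data=True, has_owner=True)
print(economic_outlook(routine_routing))  # Strong now
print(economic_outlook(replace(routine_routing, rules="moderate")))  # Promising with discipline
```

Checking disqualifiers before upside reflects the table's logic that a strong-looking workflow on messy data still fails first on cleanup cost.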

Most teams over-scope the first deployment. They start with ambition instead of a workflow that can be measured, contained, and proven. The first win is not broad. It is controlled.

AI already improves how quickly work gets done. The real shift happens when routine tasks no longer rely on a human queue. That is where cost structure changes, response time is set by execution rather than by queue wait, and teams focus their effort on higher-value decisions.

When this shift is applied to the right workflows, the impact compounds. Clear definitions of “done,” measurable processes, and connected systems allow agents to complete work end to end while keeping humans in control of exceptions. This is how efficiency turns into real operating gain.

Platforms like YourGPT are built around this model. By helping teams define workflows, connect systems, and deploy agents across support, sales, and operations, the focus stays on outcomes rather than activity. The opportunity is not just faster work. It is building systems that finish the work reliably and scale with clarity.

Move beyond assumptions. YourGPT helps you model ROI, identify automation opportunities, and deploy agents that deliver measurable results.
