AI Customer Support Trends Defining 2026

The most useful thing the 2026 AI support data tells you is also the thing most teams keep skipping.

AI is not spreading evenly across customer support. It is concentrating in the parts of the queue that are repetitive, rule-heavy, and expensive to keep routing through people. That is why the best public results come from order checks, account help, first-response triage, case summaries, and similar work that follows a known path.

That sounds obvious, but a lot of teams still buy as if fluency is the main event. It is not. The harder question is whether AI expands coverage, removes repetitive load, and stays out of the way when judgment is required.

AI in support has moved from answering questions to taking action. From reacting to problems to detecting them early. From a single chat widget to more consistent resolution across the channels customers actually use. The gap between the teams that have made that shift and the ones still running first-generation deployments is getting wider.

If you need raw benchmarks, see our full AI customer service statistics roundup. This article is the companion piece. It focuses on what those numbers mean for support teams in 2026, which assumptions they support, and which ones they do not.

The first shift is financial, not technical.

In a February 18, 2026 Gartner survey, 91% of customer service leaders said they were under pressure to implement AI in 2026. Once that pressure reaches the budget line, the conversation changes. Teams stop asking whether the bot sounds smart and start asking whether the queue moves faster, more tickets stay contained, and fewer agent hours disappear into repetitive work.

Budget behavior points in the same direction. Gartner reported in October 2025 that 75% of service and support leaders increased AI spending compared with the prior year.

That spend is not only going into tools. A separate December 2025 Gartner survey found that 42% of organizations are hiring specialized roles such as AI strategists, conversational AI designers, and automation analysts to manage those investments.

Most support teams do not have a chatbot problem. They have a queue problem.

Support AI creates value when it absorbs or shortens work that would otherwise consume human queue time:

In practice, that means fewer agents spending their morning clearing order-status tickets, fewer password-reset requests waiting for manual handling, and fewer support leads reading five-message threads only to send the same policy answer again.

That distinction matters because polished answers are cheap. Queue relief is not.

This is also where the label gets stretched. Plenty of vendors describe a polished answer layer as an AI agent. The more useful systems do something harder: they finish a narrow task cleanly, shorten handling time, and cut the admin burden that support teams have treated as normal for years.

The public case studies all point to the same kind of work.

In its February 27, 2024 announcement on its OpenAI-powered assistant, Klarna said the system handled 2.3 million customer conversations in its first month, did work equivalent to 700 full-time agents, reduced average resolution time from 11 minutes to under 2 minutes, and cut repeat inquiries by 25%. Klarna also projected a $40 million profit impact for 2024.

That example gets repeated so often that many teams take the wrong lesson from it. Klarna does not prove that support is heading toward full autonomy. It shows that AI works extremely well when the scope is narrow, the requests repeat, the rules are known, and the workflow can be contained with very little judgment.
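As a rough illustration of that scoping rule, the conditions can be written as an explicit eligibility check. This is a minimal sketch, not Klarna's method: the `Ticket` fields, the intent names, and the `route` function are all hypothetical, chosen only to show how a narrow scope keeps judgment-heavy work with people.

```python
from dataclasses import dataclass

# Hypothetical set of intents a team has decided are narrow and repeatable.
AUTOMATABLE_INTENTS = {"order_status", "password_reset", "refund_policy"}


@dataclass
class Ticket:
    intent: str            # classified request type (assumed to come from an upstream step)
    has_known_rule: bool   # a documented policy covers this request
    needs_judgment: bool   # exception, dispute, or trust-sensitive case


def route(ticket: Ticket) -> str:
    """Return "ai" only when every scoping condition holds, otherwise "human"."""
    in_scope = ticket.intent in AUTOMATABLE_INTENTS
    if in_scope and ticket.has_known_rule and not ticket.needs_judgment:
        return "ai"
    return "human"
```

The default matters more than the rule itself: anything that fails a single condition falls back to a person, so the automated scope only grows when a team deliberately adds to it.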

Bank of America reinforces the same point from a different operating environment. In August 2025, the bank said Erica surpassed 3 billion client interactions, averaging more than 58 million interactions per month, with more than 98% of clients getting what they needed without escalation to a live agent. The company also said the system is used by more than 90% of the bank's employees and that internal AI deployments helped reduce IT service desk calls by more than 50%.

The lesson is plain enough: AI support performs best when the work is frequent, structured, and too expensive to keep routing through people by default. Your human agents need to know those boundaries too. If you outsource part of your human support team, for example through a third-party C-suite executive assistant service, communicate the same rules to them as well.

The scope now goes well beyond chatbots. In mature deployments, AI is usually handling four kinds of work:

One of the clearest 2026 shifts is that AI is changing the economics of service coverage, especially across languages and time zones.

CSA Research found that 76% of consumers prefer buying products with information in their own language, while 40% will not buy at all if that information is missing. For support teams, that is not just a localization point. It is a service expectation.

Klarna’s assistant was deployed across 23 markets and more than 35 languages from a single AI system. That level of reach used to require a much heavier regional support footprint.

Multilingual support now sits much closer to a baseline expectation than a premium service. Once customers get fast help in their own language from one provider, they expect it from the next.

One narrow way to evaluate AI is to look only at how many conversations it contains.

Some of the cleanest gains show up elsewhere. Support gets more efficient when the human who takes the case does not have to reconstruct the thread, hunt for the right rule, and write wrap-up notes from scratch afterward.

Agent-assist tooling matters for the same reason. Summaries, grounded response suggestions, live policy retrieval, and post-call automation all reduce drag inside the queue. For many teams, that is a faster win than customer-facing containment alone.

The useful frame for 2026 is not automation versus augmentation. It is where each belongs.

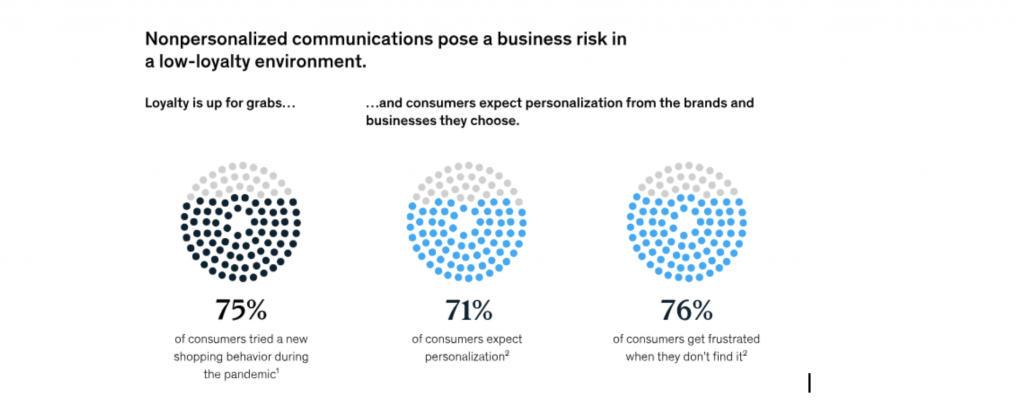

Speed gets attention first, but relevance is where the business impact shows up. McKinsey's personalization research found that faster-growing companies drive 40% more of their revenue from personalization than their slower-growing peers. The same research found that 76% of customers get frustrated when personalized interactions are absent.

That matters in support because personalization is not only a marketing problem. Customers increasingly expect the support layer to understand account context, prior interactions, and likely intent without making them restate everything from scratch.

The pattern also shows up in smaller operating environments, not only in headline case studies.

In our published AI customer service statistics roundup, interviews with founders pointed to the same practical gains first: faster first response, shorter resolution cycles, and less repetitive work sitting in the queue. The same page also notes that teams using clear AI-human collaboration models resolved issues faster than teams leaning on either side alone.

That matters because it lines up with the broader market evidence. The practical gains arrive first in queue speed, handoff quality, and repetitive workload reduction. They do not begin with full replacement.

The next phase of AI in support looks less like a better chatbot and more like a broader service layer. A few shifts matter more than the rest.

Polish is easy to notice. Queue health is easier to miss and far more important.

The better questions are:

Those are the measures that tell you whether AI is helping the support team or just looking good in a quarterly review.

The public data is clearer on this than most product messaging.

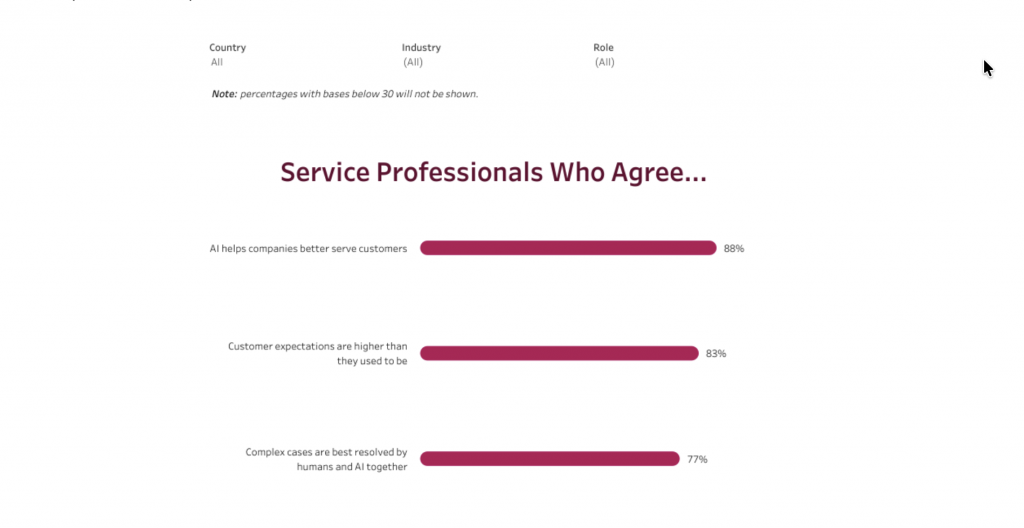

AI is reshaping support, but the public numbers do not support the claim that mature service organizations are rapidly removing the human layer, or that they need to.

That is a more credible signal than the replacement story. The public numbers do not prove that most support teams are ready to automate complex, exception-heavy, or trust-sensitive work. The market is building hybrid service:

That model is less cinematic than the usual AI narrative. It is also the one most support teams can run without making service worse.

The main difference between average deployments and strong ones is how deliberately the queue gets redesigned.

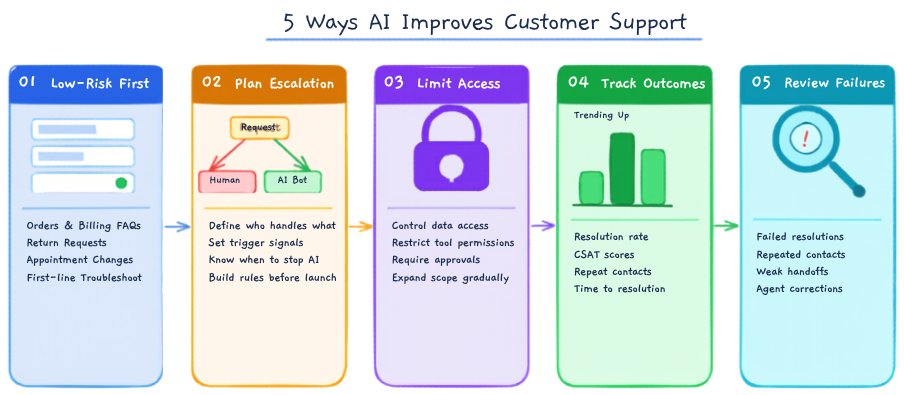

The teams getting the best results tend to follow the same pattern:

These are still the most practical ways to apply AI without making the support experience worse.

The teams getting the most from AI in 2026 are not the ones chasing the biggest automation number. They are the ones improving three things: coverage, compression, and control.

Coverage means helping customers across channels, languages, and hours. Compression means removing repetitive work from human teams. Control means keeping sensitive and complex cases with the right people.

That is the real standard. If AI expands coverage, reduces repeatable work, and improves handoffs to humans, it is creating value. If it only looks good in a demo, it is not.
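One way to make coverage, compression, and control concrete is to compute them from ticket records. The sketch below is illustrative only: the `TicketRecord` fields, the five-minute first-response target, and the three formulas are assumptions made for the example, not definitions taken from the surveys or case studies cited in this article.

```python
from dataclasses import dataclass


@dataclass
class TicketRecord:
    first_response_minutes: float
    repetitive: bool    # e.g. order status, password reset
    sensitive: bool     # e.g. dispute, complaint, account security
    resolved_by: str    # "ai" or "human"


def share(matching: int, total: int) -> float:
    """Fraction in [0, 1]; 0.0 when there is nothing to measure."""
    return matching / total if total else 0.0


def scorecard(tickets: list[TicketRecord], target_minutes: float = 5.0) -> dict[str, float]:
    """Assumed definitions: fast first response, AI-resolved repeats, human-held sensitive cases."""
    repetitive = [t for t in tickets if t.repetitive]
    sensitive = [t for t in tickets if t.sensitive]
    fast = sum(t.first_response_minutes <= target_minutes for t in tickets)
    return {
        "coverage": share(fast, len(tickets)),
        "compression": share(sum(t.resolved_by == "ai" for t in repetitive), len(repetitive)),
        "control": share(sum(t.resolved_by == "human" for t in sensitive), len(sensitive)),
    }
```

Tracked week over week, a scorecard like this shows whether automation gains are coming at the expense of control, which a single automation percentage cannot show.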

The best support teams are not using AI everywhere. They are using it where it works best: repetitive, rules-based, time-sensitive tasks. That is where AI reduces queue pressure, speeds up responses, and gives agents more time for issues that need judgment.

So do not ask, “How much did we automate?” Ask, “Did support actually get better?” If queues are shorter, handoffs are smoother, and customers get faster help without losing trust, then AI is doing its job.
