AI for Customer Service in 2026: From Answering to Resolving

The industry has shifted from Deflection (steering users away from support) to Resolution (executing tasks and closing the issue). While legacy chatbots only provide information, agentic AI like YourGPT integrates directly with business systems such as Stripe, CRMs, and logistics platforms to close tickets autonomously. The new gold standard for CX success is no longer Response Time but First Contact Resolution, along with the ability to move from reactive support to Proactive Problem Solving.

Leya AI runs over 1,000 customer support conversations every month. More than 800 of them close without a human involved, including full Stripe subscription cancellations, billing disputes, and account changes.

Not because the questions are easy. Because the system is connected to the right tools, trained on real business logic, and built to finish what it starts.

Thivanka De Silva, Founding Product Manager at Leya AI, shared what made the difference:

“We were able to move away from Intercom as it became too expensive. Once set up, YourGPT worked extremely well for us. After training it with accurate information, it genuinely helped automate customer service.”

That is what good AI support actually looks like. It does not just answer. It gets the job done.

This matters even more now. According to Gartner, 91% of customer service leaders are under pressure to expand AI initiatives in 2026. The pressure is real. But most of the implementation is happening at the conversation layer, where AI responds, rather than the resolution layer, where it actually closes problems.

That is why so many AI support projects disappoint. The ambition is high, but most systems are only built to handle the conversation.

AI for customer service in 2026 means systems that take a request from first contact to a closed outcome. The defining capability is action: connecting to billing systems, processing cancellations, updating accounts, routing by intent, and confirming results. The conversation is the interface. The real work happens inside the systems behind it. AI agents built for resolution operate across those systems rather than sitting at the conversation layer alone.

The tools, the models, the interfaces: all of it has matured to where it is no longer the bottleneck.

What separates teams getting results from teams still configuring chatbots is how deeply their AI connects to where support work actually happens.

An AI agent with no connection to your order management system cannot process a return. It can explain your return policy clearly, in multiple languages, with perfect tone, but it cannot process the return. That distinction determines whether the customer leaves with their problem solved or picks up the phone.

Deflection rate became the standard KPI because it is easy to measure and easy to move.

You can push it up by adding FAQ coverage. You can push it up by making escalation harder to reach. You can push it up by having AI generate confident-sounding responses that technically address a question without actually doing anything about it. All of that looks like progress on a dashboard.

Resolution rate is harder to measure and much harder to fake. It requires knowing whether the customer’s actual need was met, and whether they had to contact you again about the same thing. If a customer returns within 48 hours about the same issue they supposedly resolved through your AI, the resolution failed.
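The repeat-contact check described above can be sketched in a few lines. This is a minimal illustration, not a definitive metric definition: the `Contact` record, its field names, and the choice to measure the 48-hour window from when the first contact was opened are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Contact:
    customer_id: str
    issue: str            # normalized issue label (hypothetical), e.g. "refund"
    opened_at: datetime
    closed_by_ai: bool    # True if the AI marked this contact as resolved

def resolution_rate(contacts, repeat_window=timedelta(hours=48)):
    """Share of AI-closed contacts with no repeat contact on the same issue
    from the same customer inside the window."""
    ai_closed = [c for c in contacts if c.closed_by_ai]
    if not ai_closed:
        return 0.0
    resolved = 0
    for c in ai_closed:
        repeated = any(
            o is not c
            and o.customer_id == c.customer_id
            and o.issue == c.issue
            and c.opened_at < o.opened_at <= c.opened_at + repeat_window
            for o in contacts
        )
        if not repeated:
            resolved += 1
    return resolved / len(ai_closed)
```

A contact the AI closed only counts as resolved here if the same customer does not come back about the same issue inside the window, which is the part a deflection metric never sees.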

The teams with the most mature AI deployments have moved away from deflection as a primary metric. They track first-contact resolution. They watch repeat contact rates. They measure CSAT across every interaction, not the 4% of customers who voluntarily fill out a post-chat survey.

What you measure shapes what you build. And most teams are building to a metric that rewards the wrong behavior.

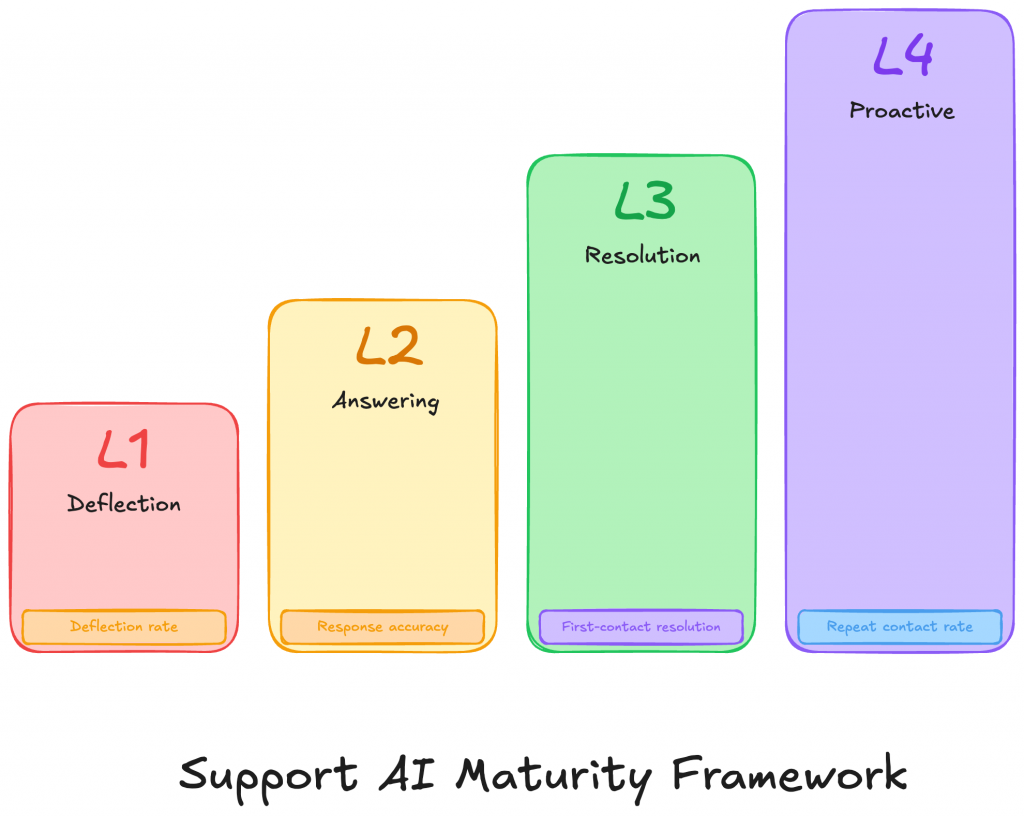

Most conversations about AI in customer service jump straight to what the technology can do. The more important question is simpler: what stage is the support team actually operating at today?

That is where most confusion starts.

A company may have an AI assistant live on its website, some automation in place, and rising deflection numbers on the dashboard. On paper, that can look like meaningful progress. However, the customer may still be left with an issue that remains unresolved.

This framework helps make that gap visible. It separates AI systems that reduce visible ticket volume from AI systems that actually reduce customer effort and close support work end to end.

Most companies deploy at L1 or L2 and measure as if they are at L3. The results disappoint because the architecture does not support the outcome.

The move from L2 to L3 is not a prompt upgrade. It is an integration and training problem. And the move from L3 to L4 requires live access to the data where problems first appear, before the customer sees them.

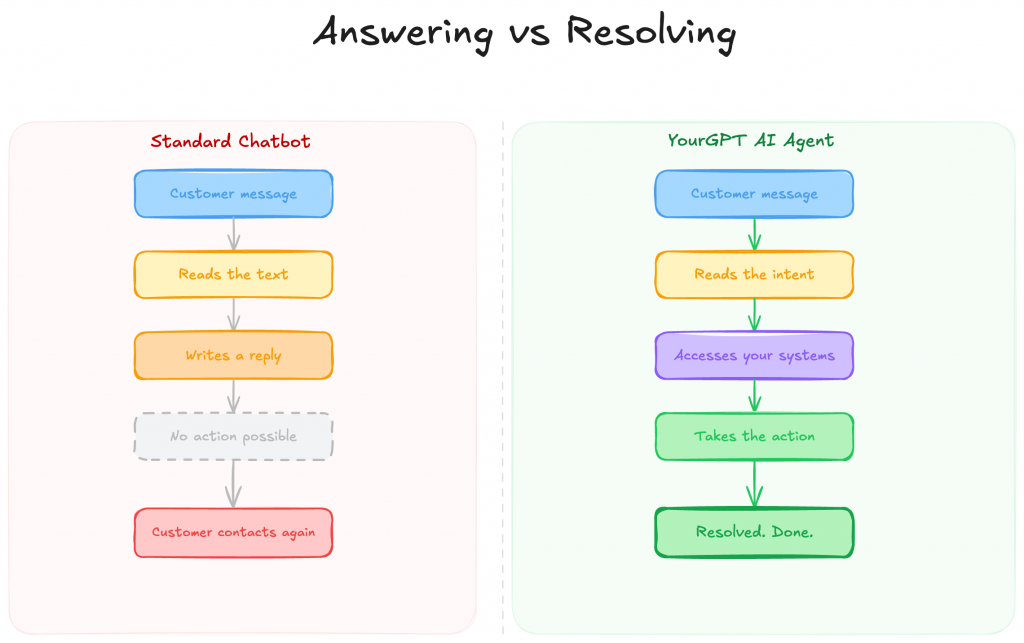

A standard chatbot works within the conversation window. It reads what the customer sends and generates a response. That response might be accurate and useful. But the chatbot itself cannot go anywhere outside that window or do anything inside your systems.

Agentic AI operates differently. It identifies what action is needed, connects to the relevant system, carries out the action, and reports back with something that actually happened, not just something that was said.
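The four steps in that description (identify the action, connect to the system, carry it out, report what happened) can be sketched as below. Everything here is hypothetical: the in-memory `SUBSCRIPTIONS` dictionary stands in for a real billing system, the keyword match stands in for an intent model, and none of the names reflect YourGPT's or Stripe's actual APIs.

```python
from typing import Callable, Dict, Optional

# Hypothetical in-memory stand-in for a billing system.
SUBSCRIPTIONS = {"cust_1": "active"}

def cancel_subscription(customer_id: str) -> str:
    """Carry out the action in the (simulated) backend and report the result."""
    if SUBSCRIPTIONS.get(customer_id) != "active":
        raise LookupError("no active subscription")
    SUBSCRIPTIONS[customer_id] = "canceled"
    return "subscription canceled"

# Registry of actions the agent is allowed to take.
ACTIONS: Dict[str, Callable[[str], str]] = {
    "cancel_subscription": cancel_subscription,
}

def identify_action(message: str) -> Optional[str]:
    # Placeholder for intent detection; a real system would use a model.
    if "cancel" in message.lower():
        return "cancel_subscription"
    return None

def handle(customer_id: str, message: str) -> dict:
    action = identify_action(message)
    if action is None:
        return {"status": "escalated", "detail": "no supported action identified"}
    try:
        detail = ACTIONS[action](customer_id)
    except LookupError as exc:
        # The action could not be completed, so hand off rather than improvise.
        return {"status": "escalated", "detail": str(exc)}
    return {"status": "resolved", "action": action, "detail": detail}
```

The point of the sketch is the return value: the reply is built from the backend's result, so "resolved" is only reported when the state actually changed.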

This is where YourGPT is built to operate. Not as a layer that sits in front of your support queue and generates responses, but as an agent that moves across your connected systems and closes requests. When a customer reports a billing error, the YourGPT agent checks the account, identifies the discrepancy, initiates the correction, and confirms the outcome, within the same conversation, without a human step.

Gartner projects that agentic AI will autonomously resolve 80% of common service issues without human involvement by 2029. The projection describes where the industry is heading. The leading implementations are already at that level on the workflows they have properly connected.

The knowledge layer matters here too. Retrieval-Augmented Generation (RAG) means the agent pulls from your actual knowledge base before generating any response, rather than drawing on general training data and estimating. Answers stay grounded in what your business actually says. Confidently wrong answers are one of the fastest ways to break customer trust. RAG is the architectural decision that makes them far less likely.
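As a rough illustration of the retrieve-then-answer order, the sketch below looks up a passage first and only builds a prompt when something relevant is found. It is a toy under stated assumptions: the two knowledge-base passages are invented examples, and word overlap stands in for the embedding search a production RAG system would normally use.

```python
import re

# Hypothetical knowledge-base passages, invented for this example.
KNOWLEDGE_BASE = [
    "Refunds are available within 30 days of purchase.",
    "Subscriptions renew monthly on the original signup date.",
]

def tokens(text):
    """Lowercased word set used for a crude overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, passages, min_overlap=2):
    """Return the best-matching passage, or None if nothing is close enough."""
    q = tokens(question)
    scored = [(len(q & tokens(p)), p) for p in passages]
    best_score, best = max(scored, key=lambda s: s[0], default=(0, None))
    return best if best_score >= min_overlap else None

def build_prompt(question, passages):
    """Build a grounded prompt, or return None to signal 'escalate, do not guess'."""
    passage = retrieve(question, passages)
    if passage is None:
        return None
    return f"Answer using only this source:\n{passage}\n\nQuestion: {question}"
```

Returning `None` when retrieval finds nothing is the design choice that matters: the agent declines or escalates instead of answering from general knowledge.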

There is no single model that performs equally well across every type of customer interaction.

A billing policy question requires precision above everything else. A customer who has had a genuinely bad experience requires something different: the ability to read emotional context and respond in a way that does not make things worse. A technical troubleshooting request needs deep product knowledge. Pushing all of these through the same model produces consistent mediocrity across the board.

YourGPT’s architecture handles this by routing different request types to configurations trained and calibrated for those tasks. From the customer’s perspective, the conversation is seamless. Behind it, the right approach is being applied to the right problem.

The other piece is making sure the AI works from verified information rather than general knowledge. This is why connecting to a well-structured knowledge base matters. When responses are grounded in your actual policies and documentation, accuracy holds as your product changes. Without that connection, the system is working from a snapshot of your business that gets stale the moment anything is updated.

Most support starts when a customer notices something has gone wrong and contacts you. By that point, the experience has already taken a hit.

Proactive customer service changes the sequence. The system identifies a problem forming in your operational data and reaches out before the customer has a reason to be frustrated. A payment fails on renewal day, and the AI sends a resolution prompt before the account locks. A delivery misses its window, and the customer receives options before they have had time to wonder what happened.

The requirement is live access to operational data. An AI working from a static knowledge base has no visibility into what is happening in your billing system. An AI connected to real operations can act before the customer has to.
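The failed-renewal case can be sketched as a scan over payment events. This is an assumption-heavy illustration: the `PaymentEvent` shape, the status values, and the three-day grace period before an account locks are all hypothetical, and a real deployment would read these events from the billing system rather than a list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class PaymentEvent:
    customer_id: str
    status: str            # "succeeded" or "failed" (assumed values)
    occurred_at: datetime

GRACE_PERIOD = timedelta(days=3)  # assumed delay between a failed payment and account lock

def outreach_queue(events, now):
    """Customers whose latest payment failed and whose account has not locked yet,
    ordered by how soon the lock happens."""
    latest = {}
    for event in sorted(events, key=lambda e: e.occurred_at):
        latest[event.customer_id] = event  # later events overwrite earlier ones
    queue = []
    for customer_id, event in latest.items():
        lock_at = event.occurred_at + GRACE_PERIOD
        if event.status == "failed" and now < lock_at:
            queue.append((customer_id, lock_at))
    return sorted(queue, key=lambda item: item[1])
```

Customers who already retried successfully drop out of the queue, and accounts that have already locked are left for a different workflow, so the outreach only goes where acting early still helps.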

HealthBird, a platform that helps users find and enroll in health insurance, used this capability to support international expansion into markets like Ecuador. The support challenge was not just volume. It was serving customers across languages through one of the more genuinely complex product categories that exists. With AI handling the volume and human agents handling cases requiring real judgment, HealthBird saw 25% user base growth directly tied to having support infrastructure that made expansion viable. Good support infrastructure made growth possible, not just cheaper.

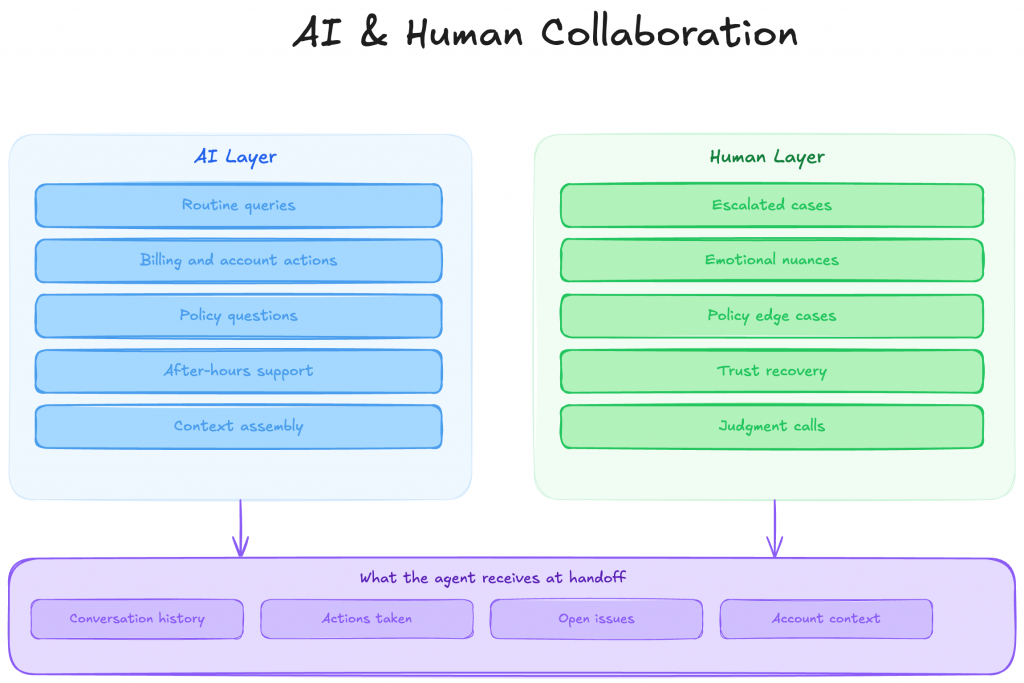

The question of AI versus human agents produces the wrong conversation.

The teams running the highest resolution rates are not choosing one over the other. They are running AI on the work where consistency and speed matter, and keeping humans on the work where reading the situation matters.

AI handles volume, transactions, policy questions, and anything with a clear correct answer. Human agents handle escalated cases, emotionally complex situations, and anything that falls outside normal parameters, where the relationship needs attention, not just the ticket.

What makes this work is the quality of the handoff. When a human agent joins a conversation that AI has been handling, they should have full context already assembled: what the customer said, what actions have been taken, what remains open. The agent’s job is to close the case, not catch up on it.
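One way to picture "full context already assembled" is a small record the AI fills in before the human joins. The structure below is a hypothetical sketch; the field names simply mirror the three items listed above and are not taken from any particular product.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class HandoffContext:
    customer_statements: List[str]  # what the customer said
    actions_taken: List[str]        # what the AI has already done
    open_items: List[str]           # what remains open

    def brief(self) -> str:
        """Render a short summary a human agent can read before replying."""
        def section(title, items):
            body = "\n".join(f"- {item}" for item in items) or "- none"
            return f"{title}:\n{body}"

        return "\n\n".join([
            section("Customer said", self.customer_statements),
            section("Actions taken", self.actions_taken),
            section("Still open", self.open_items),
        ])
```

Keeping "still open" as its own list makes the agent's first task explicit, so they start by closing the case rather than rereading the transcript.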

SKNANB, a Caribbean bank with over 50 years of operation, runs this model in financial services, one of the more demanding environments for AI because the cost of a wrong answer is real. Their AI handles banking queries within defined policy boundaries, consistently, at any hour. Human agents take the cases requiring judgment. Results after deploying with YourGPT: 85% first-contact resolution, 60% reduction in response time, 40% improvement in customer satisfaction scores.

What made it viable in a regulated context is that the AI works within explicit limits. It handles what it is trained on and escalates cleanly at the edge. No improvising. That predictability is what makes it appropriate in financial services, and the same principle holds anywhere wrong answers carry real consequences.

Most teams use sentiment analysis as a reporting layer. A conversation ends, the transcript is scored, results appear in the weekly dashboard.

The useful application is during the conversation, while something can still be done.

When the AI detects that a customer’s tone has been getting steadily more frustrated over several messages, that is the moment to bring a human in before the customer reaches the point of demanding a manager or leaving a negative review. A senior agent steps in with the full context already assembled, before the situation escalates further.
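A simple version of that trigger looks at the trend across recent messages rather than any single score. The sketch assumes each customer message already has a sentiment score between -1 (very negative) and 1 (very positive) from some upstream model; the window size and threshold are illustrative values, not recommendations.

```python
def should_escalate(scores, window=3, threshold=-0.3):
    """Escalate when the last `window` scores fall at every step
    and the most recent one is at or below `threshold`."""
    if len(scores) < window:
        return False
    recent = scores[-window:]
    declining = all(later < earlier for earlier, later in zip(recent, recent[1:]))
    return declining and recent[-1] <= threshold
```

Requiring both a steady decline and a low latest score avoids pulling in a human for a customer who is mildly less cheerful than a message ago, or for one who started annoyed but is calming down.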

At scale, this real-time signal does something else as well. If a specific billing step or product issue is generating consistent frustration across a large number of conversations, that pattern surfaces in the data well before it appears in your churn numbers. This is where CRM integration matters: when conversation sentiment and outcomes flow into your CRM automatically, the product team and the support team are working from the same picture of what customers are actually experiencing.

A significant portion of support friction comes from one specific requirement: customers have to translate a visual or physical problem into words precise enough for a system to understand.

A damaged product is obvious in a photo. Describing the specific damage in text, in a way a support flow can interpret correctly, takes effort and often loses something in the translation.

Omnichannel support in 2026 goes beyond channel availability. It means accepting multiple input types (photos, voice messages, documents, screen recordings) within the same conversation. The customer communicates the way they naturally would. The system works from what they actually provided, not from a text description of it.

This removes one of the most stubborn sources of friction in support interactions. It is not a feature addition. It is a genuine improvement to the experience.

Post-interaction surveys return responses from 3 to 7% of customers on average.

Decisions about support quality, AI performance, and agent effectiveness are being made from a sample that small, which skews toward the very satisfied and the very frustrated. The majority of customers, those who had an ordinary experience, mostly do not fill out surveys.

AI-based measurement evaluates every conversation. Resolution rates, sentiment trends, escalation frequency, repeat contact rates: all of it runs across the complete dataset. Problems become visible in days rather than quarters. If frustrated conversations are clustering around a specific workflow step, you see the pattern before it shows up in churn. If a question type is escalating at an unusual rate, you know exactly where to look.

A well-maintained knowledge base connected to this measurement layer improves over time automatically. The system learns from every interaction. Accuracy builds through use, not just through manual updates.

Answering means the AI provides information in response to a question. Resolving means the AI takes action to fix the issue. For example, explaining how to cancel a subscription is answering, while actually processing the cancellation and confirming it is resolving. Resolution requires system integration, while answering only requires knowledge.

Most failures come from generic training and lack of system integration. AI trained on broad data lacks business-specific accuracy, and without access to billing or order systems, it cannot take action. These issues stem from poor setup rather than limitations of AI itself.

Yes. AI operates within clearly defined rules, handles tasks within its scope, and escalates when needed. With proper training and strict boundaries, it can perform effectively. Some implementations achieve up to 85% first-contact resolution in financial services.

Focus on cost per issue resolved, not cost per conversation. If AI resolves more issues without increasing costs significantly as volume grows, it is effective. Always evaluate pricing at current and future scale to ensure long-term savings.
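The difference between the two cost figures is simple arithmetic, sketched below. The function name and the numbers in the usage note are hypothetical and only show how the calculation works.

```python
def cost_per_resolution(total_cost, conversations, resolution_rate):
    """Total spend divided by the number of issues actually resolved."""
    resolved = conversations * resolution_rate
    if resolved <= 0:
        raise ValueError("no resolved issues to divide by")
    return total_cost / resolved
```

With an illustrative spend of 400 across 1,000 conversations at an 80% resolution rate, cost per conversation is 0.40 but cost per resolved issue is 0.50. Running the same calculation at projected future volume and pricing shows whether the savings hold as usage grows.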

Proactive service means AI detects issues in real-time data and reaches out before the customer does. Examples include failed payments or missed deliveries. This requires direct integration with operational systems, not just a knowledge base.

Basic resolution capabilities typically take 2 to 4 weeks. This includes connecting one system, training on policies, and testing workflows. Simpler use cases launch faster, while complex integrations take longer.

AI can handle routine, rule-based tasks like cancellations, refunds, password resets, billing issues, account updates, and returns. Complex or sensitive cases requiring judgment are better handled by human agents.

AI improves by learning from interactions. It refines policy understanding, detects patterns, and reduces escalation rates. With strong system connections and data feedback, it becomes more accurate and efficient over time.

Teams reaching 80%+ resolution rates did not get there by overhauling their entire support operation.

They identified one workflow where a specific action was possible. Connected it properly. Trained it on real business rules. Measured whether the problem closed, not whether the conversation ended.

Using the maturity framework above: if your team is at L1 or L2, the move to L3 starts with one workflow, one backend connection, and training on the actual policies that govern it. Not ten workflows. One, done properly.

From there, each additional workflow is faster because the model is proven and the integration pattern is understood. Resolution rates improve. Repeat contact rates fall. Agent time moves toward the cases that actually need human judgment.

The teams still optimizing deflection rate will keep getting better at handling conversations. The teams building for resolution will close more of them. By the end of 2026, that difference will be difficult to ignore.
