7 AI Skills Creators Can Turn Into Courses

This guide covers 7 AI course ideas creators and online instructors can turn into practical, high-value courses. Topics like AI agents, RAG, context engineering, MCP, and AI workflows stand out because they connect to real use cases and skills people want to learn right now.

Creating content consistently sounds simple until you have to decide what to make next.

Finding fresh ideas is often the hardest part of the process. A strong piece of content usually starts with a strong direction, and that is exactly what most creators need more of.

In this blog, we are sharing 7 practical ideas creators can use to find that direction faster. Each one gives you a useful starting point that you can adapt to your niche, your style, and the audience you want to reach.

These course ideas focus on practical AI skills that people can use in real work. Each one connects to current demand and offers clear opportunities for creators to build valuable, monetizable content.

One of the most valuable AI topics right now is building agents that can actually do real work.

People have already seen what basic agents look like. What they want now is a better understanding of how agents are designed for real environments, how they use tools, how they manage context, and how they stay reliable when tasks become more complex.

That makes this a valuable direction for creators. It helps move the conversation beyond prototypes and into something more practical and aligned with how AI is actually being used today.

A course or content series here can help your audience understand agents that support business operations, automate workflows, or act as useful personal assistants. It is a practical topic because it connects directly to what companies and builders are trying to ship right now.

The main reason this topic stands out is its focus on systems that take action, not just systems that generate responses. It shifts attention from answering questions to actually completing tasks.

That difference makes it practical in real scenarios. It helps people see how AI can automate work, not just assist with it.

Prompt engineering gets most of the attention, but context engineering is what makes AI systems reliable.

The difference matters. Prompting is about asking well. Context engineering is about deciding what the model should actually see and use, including instructions, retrieved documents, examples, memory, tools, and output structure. Anthropic now describes context engineering as a core discipline for building effective agents, especially when consistency matters more than a single good response.
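
As a rough illustration of deciding what the model sees, the Python sketch below assembles instructions, an output format, retrieved documents, examples, and memory into one prompt under a size budget, keeping the most important sections and dropping the rest whole. The section names, the priority scheme, and the use of a word count in place of a real tokenizer are assumptions made for this example, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class Section:
    name: str      # label shown to the model, e.g. "INSTRUCTIONS"
    text: str      # the content itself
    priority: int  # lower number = more important


def build_context(sections: list[Section], budget_words: int) -> str:
    """Keep the highest-priority sections that fit the budget, in their original order.

    Word count is a crude stand-in for token count (an assumption of this sketch).
    """
    ranked = sorted(range(len(sections)), key=lambda i: sections[i].priority)
    keep: set[int] = set()
    used = 0
    for i in ranked:
        size = len(sections[i].text.split())
        if used + size > budget_words:
            continue  # skip the whole section instead of cutting it mid-way
        keep.add(i)
        used += size
    return "\n\n".join(
        f"## {s.name}\n{s.text}" for i, s in enumerate(sections) if i in keep
    )


sections = [
    Section("INSTRUCTIONS", "Answer only from the documents. Say 'not found' otherwise.", 0),
    Section("OUTPUT FORMAT", 'Reply as JSON: {"answer": str, "sources": [str]}', 1),
    Section("DOCUMENTS", "Refunds are available within 30 days of purchase.", 2),
    Section("EXAMPLES", "Q: Can I return shoes? A: {...}", 3),
    Section("MEMORY", "User previously asked about shipping times to Canada and Mexico.", 4),
]
print(build_context(sections, budget_words=30))  # keeps INSTRUCTIONS, OUTPUT FORMAT, DOCUMENTS
```

Because the same builder runs on every request, the model sees the same structure each time, which is where the consistency described above comes from.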

That makes context engineering a valuable topic for creators because the audience is moving past surface-level prompt tips. People want to understand why the same model performs well in one workflow but fails in another, and how better structure improves reliability.

A course or content series on this topic can help explain how teams design context on purpose instead of relying on ad hoc prompts. It also opens the door to a second high-value topic: creating reusable skills for AI. Instead of rewriting the same instructions again and again, teams are increasingly packaging repeatable behaviors into reusable skill layers. Anthropic’s Claude Skills and OpenAI’s ChatGPT Skills both reflect that shift toward structured, reusable customization.

Why this topic works in 2026

Better results do not come from better models alone. They come from better structure around the model. Understanding that shift helps your audience build systems that are more consistent, predictable, and useful in real use cases.

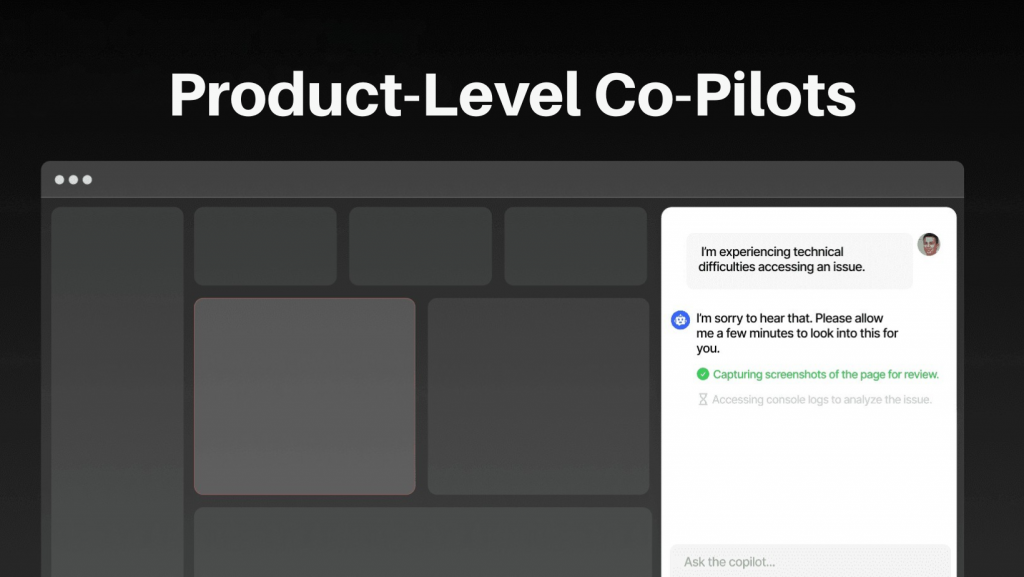

AI is becoming a core part of modern software products.

More products are building AI directly into their interfaces to make the customer experience smoother, reduce friction, and help people complete tasks faster without switching tools.

Industry reporting now frames the change as a broader shift toward AI-centric software, where AI is becoming part of the product itself rather than a separate add-on.

This is where copilots become valuable.

A well-designed copilot can help users find information, trigger actions, answer questions, and move through workflows in a way that feels natural inside the product. For creators, that makes this a strong topic to teach because the audience is not just curious about AI anymore. They want to understand how AI features are actually built into real software products.

A course or content series on this topic can show people how AI is embedded into product interfaces, how it works with application data, and how teams design these experiences safely. You are not just teaching prompts or chatbots. You are teaching how modern software is evolving.
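
One common way to embed a copilot safely is to let the model propose actions but route every action through application code that checks permissions and keeps an audit trail. The Python sketch below assumes a hypothetical `ActionRegistry` class and made-up action names; it is not the API of any particular copilot SDK.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Action:
    handler: Callable[..., Any]
    required_role: str  # role the current user must hold to run this action


@dataclass
class ActionRegistry:
    actions: dict[str, Action] = field(default_factory=dict)
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, handler: Callable[..., Any], required_role: str) -> None:
        self.actions[name] = Action(handler, required_role)

    def run(self, name: str, user_roles: set[str], **args: Any) -> Any:
        """Execute a model-proposed action only if it exists and the user is allowed to run it."""
        action = self.actions.get(name)
        if action is None:
            allowed, result = False, "unknown action"
        elif action.required_role not in user_roles:
            allowed, result = False, "permission denied"
        else:
            allowed, result = True, action.handler(**args)
        # Every attempt is logged, including refused ones, for later review.
        self.audit_log.append({"action": name, "args": args, "allowed": allowed})
        return result


registry = ActionRegistry()
registry.register("lookup_order", lambda order_id: {"id": order_id, "status": "shipped"}, "viewer")
registry.register("issue_refund", lambda order_id: f"refunded {order_id}", "admin")

print(registry.run("lookup_order", {"viewer"}, order_id="A17"))  # {'id': 'A17', 'status': 'shipped'}
print(registry.run("issue_refund", {"viewer"}, order_id="A17"))  # permission denied
```

Keeping the permission check in application code, rather than in the prompt, means a model that proposes the wrong action still cannot execute it.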

What makes this topic even stronger is that creators can connect it to real tools builders can use today. For example, the open-source YourGPT Copilot SDK gives developers a practical way to embed copilots into products, which makes the topic feel more real, more actionable, and easier for the audience to connect with.

This direction helps your audience understand how modern products are being built and how AI fits directly into everyday workflows.

Retrieval-Augmented Generation (RAG) is widely used for building AI systems on private data. The goal is simple: get accurate answers from internal documents, support data, and business systems without relying only on model knowledge.

With keyword search, you find a document and still have to read it. With RAG, the system retrieves the relevant passages and generates the answer directly, so you do not have to read the documents yourself.
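
A minimal Python sketch of the retrieve-then-answer flow: score document chunks against the question, keep the top matches, and place them in a prompt that tells the model to answer only from them. Simple word-overlap cosine similarity stands in for the embedding model and vector database a production system would use, and the final model call is left out because it depends on the provider.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question (bag-of-words stand-in for embeddings)."""
    q = Counter(tokenize(question))
    return sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Ground the model: number the passages and ask it to cite them or admit it does not know."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the sources below. Cite them like [1]. "
        "If the answer is not in the sources, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


docs = [
    "Refunds are issued within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping to Canada takes 5 to 7 business days.",
]
question = "How many days do refunds take?"
print(build_prompt(question, retrieve(question, docs, k=1)))
```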

Adoption reflects this shift. RAG is used in around 78% of enterprise AI deployments, which shows how common it has become.

This is also where most teams run into problems. A basic setup works in demos but fails in production: systems retrieve content that looks relevant but is wrong, context gets lost during chunking, and models ignore retrieved data or give confident but incorrect answers. These are common issues, not edge cases.
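
Context lost during chunking is often reduced with overlapping windows, so text near a boundary appears in full in at least one chunk. A minimal sketch, assuming word-based windows; the `size` and `overlap` defaults are illustrative, and real pipelines often split on tokens, sentences, or headings instead.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


print(chunk_words("a b c d e f g h i j", size=4, overlap=1))  # ['a b c d', 'd e f g', 'g h i j']
```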

That makes this a valuable direction for creators. People want to understand why systems fail and how to fix them. RAG connects directly to real use cases such as internal knowledge tools, support automation, and documentation search, where accuracy matters.

A course or content series here can help people move from basic pipelines to systems that work in production. It shows how retrieval design affects results, how to improve accuracy, and how to measure performance properly.
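
Measuring performance usually starts with the retrieval step on its own. Given a small labeled set of questions and the IDs of chunks known to contain each answer, two common metrics are hit rate at k (did any correct chunk appear in the top k?) and mean reciprocal rank (how high did the first correct chunk appear?). The data layout below is an assumption for illustration.

```python
def hit_rate_at_k(results: list[list[str]], relevant: list[set[str]], k: int) -> float:
    """Fraction of queries where at least one relevant chunk ID appears in the top k results."""
    hits = sum(1 for ranked, rel in zip(results, relevant) if rel & set(ranked[:k]))
    return hits / len(results)


def mean_reciprocal_rank(results: list[list[str]], relevant: list[set[str]]) -> float:
    """Average of 1/rank of the first relevant chunk per query (0 if none was retrieved)."""
    total = 0.0
    for ranked, rel in zip(results, relevant):
        for rank, chunk_id in enumerate(ranked, start=1):
            if chunk_id in rel:
                total += 1 / rank
                break
    return total / len(results)


# Ranked chunk IDs returned by the retriever for three test questions, and the correct IDs.
results = [["c3", "c1", "c9"], ["c2", "c7", "c4"], ["c5", "c6", "c8"]]
relevant = [{"c1"}, {"c2"}, {"c0"}]
print(hit_rate_at_k(results, relevant, k=3))  # 2 of 3 questions have a hit
print(mean_reciprocal_rank(results, relevant))  # 0.5
```

Tracking these numbers before and after a change to chunking or ranking shows whether the change actually helped, instead of judging from a few demo questions.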

What makes this even more useful is that it connects to real tools teams use today. Platforms like YourGPT apply RAG to power AI agents that answer questions from internal knowledge bases, support content, and business data, which makes the concept easier to understand and apply.

This works well because it focuses on a real problem companies are solving and shows how to build systems that actually work.

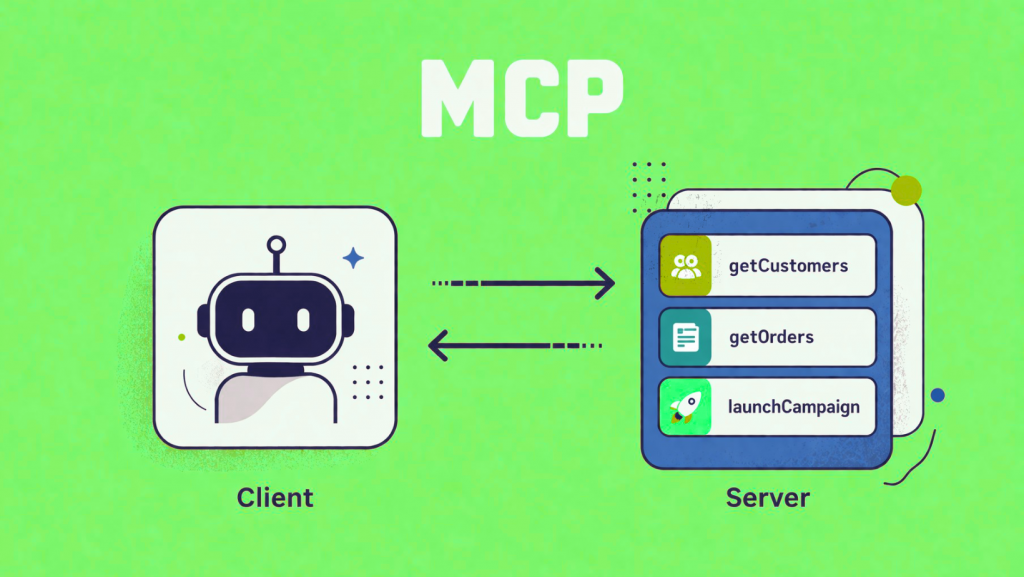

A lot of AI content still stops at prompting. AI becomes far more useful when it can interact with external tools, APIs, and business systems in a controlled way. That is why MCP (Model Context Protocol), an open standard for connecting AI applications to tools and data, is such a relevant topic in 2026.

For creators, this area is valuable because it sits at the point where curiosity turns into real implementation. People are no longer only asking how to use AI. They want to understand how AI tools actually connect to CRMs, databases, internal workflows, and external services.

A course or content series on MCP can help explain that layer clearly. You can show your audience how MCP works, why it matters, and how to use it to build systems that are more practical than simple chat interfaces. That makes the topic useful for developers, product teams, and technical builders who want to go beyond surface-level AI content.

What makes this idea especially strong is that it is both timely and practical. It gives your audience a way to understand a fast-growing part of AI infrastructure while also learning something they can apply in real projects.

This is a strong topic for creators who want to publish content that feels more advanced, more relevant, and closer to how real AI products are being built.

Content creation already uses AI. The real advantage now comes from how that process is structured. The teams and creators who get the best results are not using AI in random, one-off ways. They build repeatable workflows for research, outlining, drafting, repurposing, and review that can be used over and over again, which helps content move faster without losing quality.

McKinsey’s 2025 State of AI report shows that organizations getting more value from AI are more likely to have defined processes for how outputs are reviewed and validated, which makes workflow design a strong systems topic.

Content creation remains one of the top enterprise use cases for generative AI, according to Wharton’s 2025 AI Adoption Report, making the topic commercially relevant and practical.

A course here could show creators how to turn one idea or one research brief into multiple outputs across formats, while still keeping human judgment where it matters most.
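
A repeatable workflow of this kind can be modeled as one brief fanned out into drafts for several formats, with each draft passing through an explicit human approval step before it is published. In this Python sketch the hypothetical `draft_for` function is where a model call would go, and the format names are only examples.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    fmt: str
    body: str
    approved: bool = False


FORMATS = ["blog post", "newsletter", "social thread"]  # example output formats


def draft_for(brief: str, fmt: str) -> Draft:
    # Hypothetical stand-in for a model call that adapts the brief to one format.
    return Draft(fmt=fmt, body=f"[{fmt}] {brief}")


def run_pipeline(brief: str, reviewer: Callable[[Draft], bool]) -> list[Draft]:
    """Draft every format from one brief, then keep only what a human reviewer approves."""
    drafts = [draft_for(brief, fmt) for fmt in FORMATS]
    for d in drafts:
        d.approved = reviewer(d)  # human judgment stays in the loop
    return [d for d in drafts if d.approved]


published = run_pipeline("5 lessons from our RAG launch", reviewer=lambda d: d.fmt != "social thread")
print([d.fmt for d in published])  # ['blog post', 'newsletter']
```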

SurveyMonkey reports that 88% of marketers use AI regularly, so AI is already part of daily work for most marketers. The gap is not access; it is workflow design.

This direction works well because it focuses on building systems that help creators produce consistent, high-quality content without increasing effort.

Customer support is one of the most widely adopted AI use cases.

More companies are using AI to handle first-response support, resolve repetitive questions faster, and stay available across channels without growing support teams at the same pace. Customer expectations are moving in the same direction.

As a course topic, it is easy for people to understand, tied to a real business problem, and directly connected to how companies are adopting AI right now. Gartner reported in February 2026 that 91% of customer service leaders are under pressure from executives to implement AI, which shows how urgent this shift has become for support teams.

A course or content series on this topic can help your audience understand how AI support systems are actually designed, not just how chatbots look on the surface. That makes it useful for creators who want to teach something practical, commercially relevant, and close to real implementation.

What you can teach:

– how AI support systems handle FAQs, routing, and repetitive queries

– when to use AI replies, when to escalate, and how handoff should work

– how support agents use knowledge bases, workflows, and customer context

– how to design guardrails, approvals, and auditability for support actions

– how to evaluate system performance
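
The escalation and handoff rules in the list above can be expressed as a small routing function: the AI answers only when its knowledge-base match is strong and the request is not in a sensitive category; otherwise the ticket goes to a human. The threshold, the keyword list, and the `Route` values are illustrative assumptions, not settings from any specific platform.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AI_REPLY = "ai_reply"
    HUMAN_HANDOFF = "human_handoff"


# Example topics that always go to a person, regardless of retrieval confidence.
SENSITIVE_TERMS = ("refund", "chargeback", "legal", "cancel my account")


@dataclass
class Decision:
    route: Route
    reason: str


def route_ticket(message: str, retrieval_score: float, threshold: float = 0.75) -> Decision:
    """Decide whether the AI may answer or the ticket should be handed off to a human."""
    text = message.lower()
    for term in SENSITIVE_TERMS:
        if term in text:
            return Decision(Route.HUMAN_HANDOFF, f"sensitive topic: {term}")
    if retrieval_score < threshold:
        return Decision(Route.HUMAN_HANDOFF, "low confidence in knowledge-base match")
    return Decision(Route.AI_REPLY, "confident FAQ match")


print(route_ticket("Where is my order?", retrieval_score=0.9))
print(route_ticket("I want a refund", retrieval_score=0.95))
```

Logging each `Decision` together with its reason also produces the audit trail and evaluation data that guardrails and performance reviews depend on.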

Connecting this topic to real tools makes it even stronger. For production use cases, YourGPT is a strong fit because it helps teams build AI agents for customer support, sales, and workflow automation in a way that is directly relevant to what companies are trying to implement today.

LLM fine-tuning is currently the highest-paid AI engineering specialty, with professionals earning roughly 25–40% more than generalist ML engineers. AI engineers focused on large language models average around $206,000 across the industry. Agentic AI architecture and Retrieval-Augmented Generation (RAG) system design are the next highest-premium areas because enterprise investment is heavily concentrated in these technologies.

Developers can learn RAG without a deep machine learning background. Retrieval-Augmented Generation (RAG) is largely a software engineering problem rather than a deep machine learning one. It focuses on document ingestion pipelines, vector search systems, prompt construction, and reliability engineering. Developers typically need Python knowledge and familiarity with REST APIs. Core ML ideas such as embeddings and semantic similarity can be learned alongside implementation.

A chatbot simply generates a response to a prompt and stops. An AI agent, however, can create a plan, execute actions such as searching data, calling APIs, writing files, or triggering other agents, then evaluate results and iterate until the task is complete. Because agents can take actions, they require more careful engineering around safety, error handling, and failure management.
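
The plan, act, evaluate, and iterate cycle described above can be sketched as a loop with a step limit. Here a hypothetical `decide` function stands in for the model: it looks at the goal and the history so far and returns either a tool call or a final answer. The tool name and the scripted policy are made up for illustration; the parts that carry over to real agents are the tool registry, the observation history, error capture, and the hard cap on steps as a basic failure guard.

```python
from typing import Any, Callable

Tools = dict[str, Callable[..., Any]]


def run_agent(goal: str, decide: Callable[[str, list], dict], tools: Tools, max_steps: int = 5) -> str:
    """Ask the policy for the next step, run the chosen tool, record the result, and repeat."""
    history: list = []
    for _ in range(max_steps):
        step = decide(goal, history)
        if step["type"] == "final":
            return step["answer"]
        name, args = step["tool"], step.get("args", {})
        if name not in tools:
            observation: Any = f"error: unknown tool {name}"
        else:
            try:
                observation = tools[name](**args)
            except Exception as exc:  # report tool failures back to the policy instead of crashing
                observation = f"error: {exc}"
        history.append((name, args, observation))
    return "stopped: step limit reached without finishing"


def scripted_decide(goal: str, history: list) -> dict:
    # Stand-in for an LLM: look up the order first, then answer from the observation.
    if not history:
        return {"type": "tool", "tool": "get_order_status", "args": {"order_id": "A17"}}
    return {"type": "final", "answer": f"Order A17 is {history[-1][2]}."}


tools = {"get_order_status": lambda order_id: "shipped"}
print(run_agent("Where is order A17?", scripted_decide, tools))  # Order A17 is shipped.
```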

For RAG, developers with solid Python skills typically need about 3–6 weeks of focused learning, ideally while building a real project. The basic mechanics can be understood in a few days, but production details such as chunking strategies, reranking, evaluation methods, and handling retrieval failures require more time and experimentation.

Prompt engineering focuses on crafting instructions for a single interaction with a model. Context engineering is broader and involves designing the entire information environment a model receives, including retrieved documents, conversation history, constraints, schemas, and fallback instructions. It is an architectural discipline that strongly affects system reliability.

Most courses assume intermediate Python skills and familiarity with API usage. Fine-tuning courses typically require machine learning fundamentals. Advanced topics like multi-agent systems or Model Context Protocol integrations usually assume experience with single-agent AI applications.

Agentic AI describes systems capable of autonomously executing sequences of actions to accomplish goals. Unlike traditional LLM interactions that end after a single response, agents can plan tasks, call tools, evaluate intermediate outputs, and continue iterating until completion. Enterprise surveys indicate that nearly 88% of organizations are increasing budgets for agentic systems in 2026, making it one of the fastest-growing AI investment areas.

The Model Context Protocol (MCP) is an open standard that defines how AI models interact with external tools and APIs. Instead of building custom integrations for every tool, MCP standardizes the interface. The AI application, acting as an MCP client on the model's behalf, sends structured requests to an MCP server, which executes the tool call and returns formatted results. This approach makes integrations portable, auditable, and significantly faster to implement.
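
To make the request and response flow concrete, here is a simplified Python sketch of the JSON-RPC 2.0 messages MCP uses for the `tools/call` method, paired with a toy in-process server. It deliberately leaves out the transport (such as stdio or HTTP), the initialization handshake, and tool discovery via `tools/list`. The `get_weather` tool is hypothetical, and the exact message fields should be checked against the current MCP specification.

```python
import json
from typing import Any


def get_weather(city: str) -> str:
    # Hypothetical tool backed by a tiny in-memory table.
    forecasts = {"Paris": "Sunny", "Oslo": "Snow"}
    if city not in forecasts:
        raise ValueError(f"no forecast for {city}")
    return f"{forecasts[city]} in {city}"


TOOLS = {"get_weather": get_weather}


def make_tool_call(request_id: int, name: str, arguments: dict[str, Any]) -> str:
    """Client side: encode a JSON-RPC 2.0 request for the tools/call method."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/call",
                       "params": {"name": name, "arguments": arguments}})


def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle_request(raw: str) -> str:
    """Server side (toy): run the named tool and wrap its output as text content."""
    req = json.loads(raw)
    req_id = req.get("id")
    if req.get("method") != "tools/call":
        return _error(req_id, -32601, "Method not found")  # standard JSON-RPC error code
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return _error(req_id, -32602, f"Unknown tool: {params.get('name')}")
    try:
        output = tool(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": output}], "isError": False}
    except Exception as exc:
        # Simplification: any exception, including bad arguments, is reported as a tool execution error.
        result = {"content": [{"type": "text", "text": f"Tool failed: {exc}"}], "isError": True}
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


response = json.loads(handle_request(make_tool_call(1, "get_weather", {"city": "Paris"})))
print(response["result"]["content"][0]["text"])  # Sunny in Paris
```

The sketch keeps two failure paths apart: an unknown tool comes back as a JSON-RPC protocol error, while a tool that runs and fails returns a normal result flagged with `isError`, so the model can see what went wrong and try something else.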

Courses that teach skills actively requested in job postings provide the strongest career impact. The most valuable areas include RAG system design, AI agent architecture, LLM fine-tuning with LoRA and QLoRA, MLOps deployment, and context engineering. Programs that end with deployed production projects tend to carry more weight with hiring teams.

AI is creating a new kind of opportunity for creators and online instructors. People are not only interested in using AI tools. They want to learn skills they can apply in real work, real products, and real workflows.

That is what makes these course ideas so valuable. Topics like AI agents, context engineering, MCP, and AI-powered operations give creators a chance to teach something timely, useful, and easy to connect to real outcomes.

For creators, this is a practical way to build courses that feel relevant and worth paying for. When a course helps learners understand how AI works in real settings and how they can use it in their own projects, it becomes much more meaningful.

If your course helps someone do something better, faster, or more confidently, it will be much easier for them to remember and recommend. That is what makes these ideas worth teaching.
